Local LLMs have been gaining traction over the last few years, and I wanted to see what it was like to build a small, self-contained setup for my projects, research and daily work. My gpt-oss 20B model was more useful than expected, so I kept it in my rotation instead of dropping it as a side project.

As strong as local LLMs are, they face a fundamental limitation: their knowledge is frozen at training time, so they tend to make things up when asked about anything newer. They are good for general knowledge and structured tasks, but not very reliable for anything that depends on recent or current information.

But there is a solution to this – you can add search tools to your local LLM as MCP servers, allowing it to retrieve real-time data. Brave Search MCP was recommended to me, and since I already use the Brave browser it was a good fit. Setting it up was quicker than I expected, and it makes a real difference to the way my local LLM responds.

Brave Search MCP

Allows local LLMs to access real-time web results

The Brave Search MCP is like a bridge between your local LLM (or whichever AI client you connect it to) and the Brave Search engine. It uses the Brave Search API to query the search index, and the MCP server handles communication so your model can feed fresh data directly into its responses.
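To make the bridge idea concrete, here is a minimal Python sketch of roughly what happens behind a search tool call: a request goes to the Brave Search API with your key, and the results are flattened into text the model can read. The endpoint URL, the `X-Subscription-Token` header and the `web.results` response shape are my understanding of Brave's public API and should be checked against its documentation. `build_request`, `format_results` and `search` are hypothetical helper names, not part of the actual MCP server.

```python
import json
import urllib.parse
import urllib.request

# Assumed endpoint of the Brave Search web API; verify against Brave's docs.
BRAVE_ENDPOINT = "https://api.search.brave.com/res/v1/web/search"


def build_request(query: str, api_key: str, count: int = 3) -> urllib.request.Request:
    """Build (but do not send) a search request for the given query."""
    params = urllib.parse.urlencode({"q": query, "count": count})
    return urllib.request.Request(
        f"{BRAVE_ENDPOINT}?{params}",
        headers={"Accept": "application/json", "X-Subscription-Token": api_key},
    )


def format_results(payload: dict) -> str:
    """Flatten search results into plain text a model can read."""
    results = payload.get("web", {}).get("results", [])
    blocks = [
        f"{item.get('title', '')}\n{item.get('url', '')}\n{item.get('description', '')}"
        for item in results
    ]
    return "\n\n".join(blocks) if blocks else "No results found."


def search(query: str, api_key: str, count: int = 3) -> str:
    """Send the request and return formatted results (needs network access)."""
    with urllib.request.urlopen(build_request(query, api_key, count), timeout=10) as resp:
        return format_results(json.load(resp))
```

In practice you never write this yourself; the MCP server does the equivalent work and hands the text back to the model as a tool result.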

The main benefit is that it combines the model’s pretrained knowledge with current news, trends or updates, while the model itself keeps running locally and privately rather than in a cloud AI service. For anyone who occasionally gets frustrated with having to look something up themselves because local models don’t have internet access, adding Brave Search via MCP closes that gap while keeping you in control of the setup.

Setting up Brave Search MCP with my local model

It’s not as technical as I thought

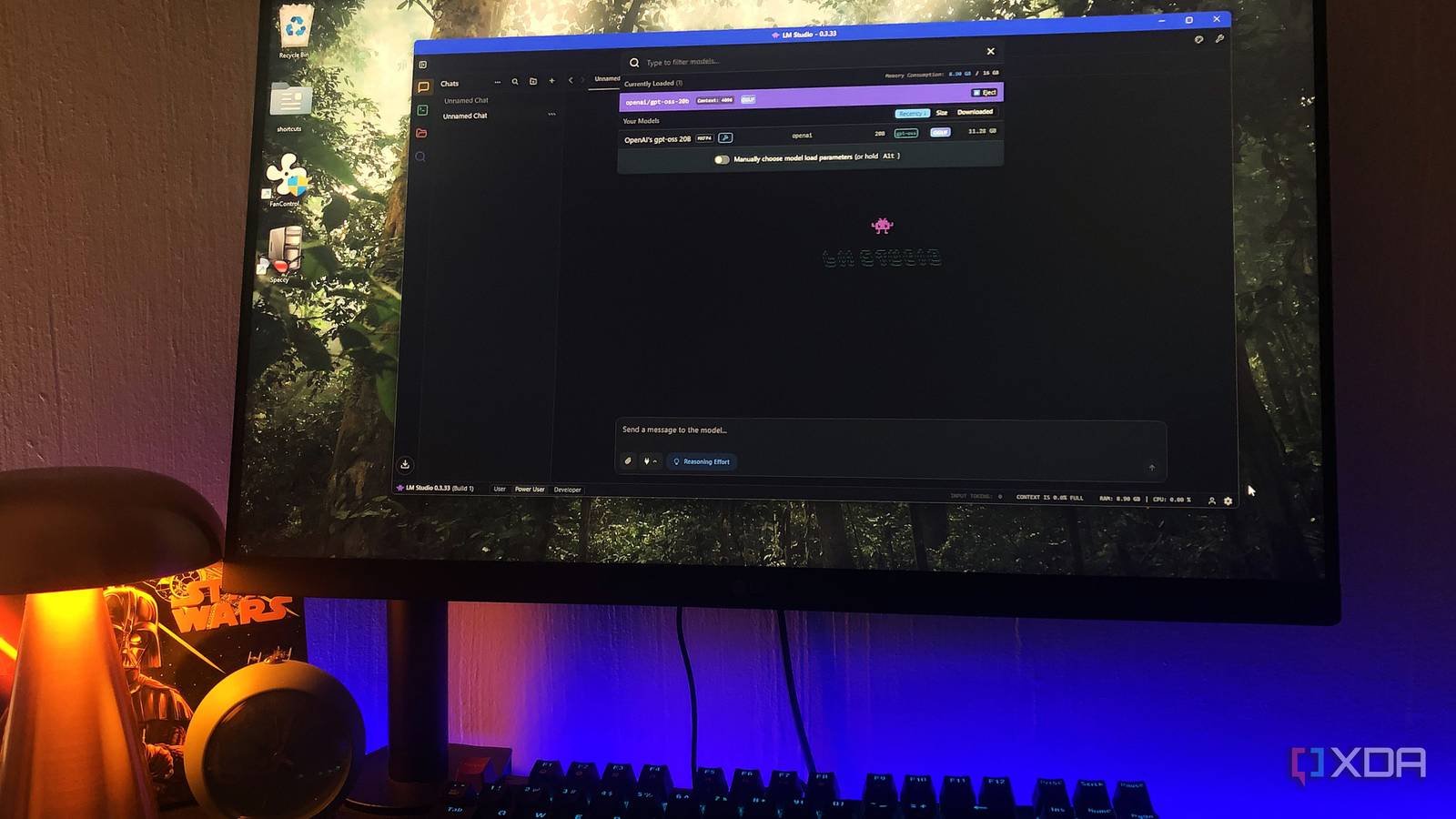

I’m not a very technical AI user, so I like working with user-friendly GUIs like LM Studio. And this setup wasn’t as complicated as I expected. The first step was to register for a Brave Search API key. You have to create an account and add a payment method, but Brave gives you $5 in free monthly credit, which is about 1,000 requests. So your card will not be charged for light to moderate usage. You can also set a usage limit to avoid going over the free credit.
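As a rough back-of-the-envelope check on what that credit means day to day, the short Python sketch below uses only the two figures quoted above ($5 and about 1,000 requests). The helper names are hypothetical, and the per-request price is simply implied by those figures rather than taken from Brave's pricing page.

```python
# Figures quoted in the article: $5 of free credit is about 1,000 requests.
FREE_CREDIT_USD = 5.00
FREE_REQUESTS = 1_000


def cost_per_request() -> float:
    """Implied price of a single search request in US dollars."""
    return FREE_CREDIT_USD / FREE_REQUESTS


def daily_budget(days_in_month: int = 30) -> int:
    """Whole searches per day that stay inside the free monthly credit."""
    return FREE_REQUESTS // days_in_month


print(f"Implied cost per request: ${cost_per_request():.3f}")
print(f"Free searches per day over 30 days: {daily_budget()}")
```

Keep in mind that a single answer from the model may trigger more than one search, so the number of prompts you can send per day is likely lower than the number of searches.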

Once I had that, I opened my mcp.json file in a text editor – you’ll likely find it in your local LLM runner’s user configuration directory. And then I added this with my Brave API key:

    {
      "mcpServers": {
        "brave-search": {
          "command": "npx",
          "args": ["-y", "@modelcontextprotocol/server-brave-search"],
          "env": {
            "BRAVE_API_KEY": "PASTE YOUR API KEY HERE"
          }
        },
        "fetch": {
          "command": "uvx",
          "args": ["mcp-server-fetch"]
        }
      }
    }
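A stray bracket or a forgotten placeholder is an easy mistake when editing this file by hand. The Python sketch below is one way to sanity-check the text of an mcp.json before restarting your LLM runner. `check_mcp_config` is a hypothetical helper, and the rules it enforces (a top-level `mcpServers` object, a string `command`, a list for `args`) are inferred from the config shown above, not from an official schema.

```python
import json

# Placeholder text from the config above; it should be replaced with a real key.
PLACEHOLDER = "PASTE YOUR API KEY HERE"


def check_mcp_config(text: str) -> list[str]:
    """Return a list of problems found in mcp.json text (empty if none)."""
    try:
        config = json.loads(text)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg} (line {exc.lineno})"]

    servers = config.get("mcpServers") if isinstance(config, dict) else None
    if not isinstance(servers, dict):
        return ['missing top-level "mcpServers" object']

    problems = []
    for name, entry in servers.items():
        if not isinstance(entry, dict):
            problems.append(f"{name}: entry must be an object")
            continue
        if not isinstance(entry.get("command"), str):
            problems.append(f'{name}: "command" must be a string')
        if not isinstance(entry.get("args", []), list):
            problems.append(f'{name}: "args" must be a list')
        env = entry.get("env", {})
        if isinstance(env, dict) and PLACEHOLDER in env.values():
            problems.append(f"{name}: API key placeholder was not replaced")
    return problems
```

To use it, read your mcp.json into a string, pass it to `check_mcp_config`, and print whatever it returns; an empty list means none of these particular checks failed.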

Also, make sure you have Node.js installed (it comes with npx), as well as uv (which provides uvx), which the Fetch server uses to retrieve web pages. The latter isn’t necessary for this to work, but it gives you more context beyond search-result snippets because it extracts the full content from web pages.
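If you are not sure whether those tools are installed, a quick check like the Python sketch below can tell you before you start debugging the config. It only looks for the `npx` and `uvx` commands named in the config on your PATH; `missing_commands` is a hypothetical helper, and a GUI app may not see exactly the same PATH as your terminal.

```python
import shutil


def missing_commands(commands=("npx", "uvx"), which=shutil.which) -> list[str]:
    """Return the commands that cannot be found on PATH."""
    return [cmd for cmd in commands if which(cmd) is None]


if __name__ == "__main__":
    missing = missing_commands()
    if missing:
        print("Not found on PATH:", ", ".join(missing))
    else:
        print("npx and uvx are both available.")
```

The `which` parameter is there only so the lookup can be swapped out for testing; by default it uses the standard library's `shutil.which`.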

Then it’s time to run it in LM Studio. First, you’ll want to enable the Brave Search and Fetch MCP plugins. In my setup, they were located at the bottom of the chat input box. I had a problem when I first tried to use them, and simply restarting LM Studio was enough to fix it – you can also force restart the plugins.

Last but not least: enter a system prompt that tells your model to use the plugins when necessary. The LLM will then decide when it needs fresh information, automatically call Brave Search and include the results in its responses. Something like “If you don’t know the answer, use the Brave Search tool to search the web.”

Using Brave Search on my local model

It was frustrating at first, until I wrote better prompts

Once everything was set up, I expected Brave Search to work immediately and give me more accurate results. Instead, the first few prompts gave me really weird and less precise answers – the model returned random bits of text with odd tags, or got stuck in tool-call loops without completing the answer. What happened here was that the model knew it had access to the tool, but didn’t know how to use it cleanly. So instead of calling Brave and getting a result, it would either expose the raw tool call or keep retrying the process over and over again.

Ironically, this happened when I specifically instructed my model to call Brave. The solution was simple: using more natural prompts. The first prompting method I experimented with was asking it for information I knew wouldn’t be in its training data, like “2026 design trends,” but without mentioning Brave. It started calling Brave right away and gave me fast and accurate results with citations.

But to actually get useful answers, you need to give structure to your requests and include everything you expect your model to do. This is the nature of working with local LLMs, as they are a bit literal and don’t infer context as well as cloud models.

Another technique is to use freshness triggers: words like “last,” “latest” and “currently” can prompt your model to call Brave. Limiting the scope also helps – asking for two or three results instead of “top 10” or “everything trending” helps avoid long tool calls.
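The prompting tips above can be sketched as a small Python helper that builds a structured request containing a freshness word and a capped number of results, plus a check for whether a prompt contains one of the trigger words. Both function names are hypothetical, the word list is just the one quoted above, and whether the model actually calls the tool still depends on the model.

```python
import re

# Trigger words quoted in the article; extend the tuple with your own.
FRESHNESS_WORDS = ("last", "latest", "currently")


def has_freshness_trigger(prompt: str) -> bool:
    """Return True if the prompt contains a freshness word as a whole word."""
    pattern = r"\b(" + "|".join(map(re.escape, FRESHNESS_WORDS)) + r")\b"
    return re.search(pattern, prompt, flags=re.IGNORECASE) is not None


def build_search_prompt(topic: str, max_results: int = 3) -> str:
    """Build a structured prompt with a freshness word and a limited scope."""
    return (
        f"What are the latest {topic}? "
        f"Give me the top {max_results} results as a short bulleted list, "
        "with one sentence each and a source link."
    )


print(build_search_prompt("2026 design trends", max_results=2))
```

Note that the prompt never names the search tool, which matches the observation that natural phrasing worked better than explicit instructions to call Brave.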

I improved my local model with one simple tool

Adding the Brave Search MCP to my local LLM didn’t make it a perfect system, but it did address one of its biggest weaknesses. Instead of relying purely on static knowledge and making something up every now and then, it now has a way to pull in not only current data, but also more niche data that it never had to begin with. The installation itself was simple; it was the prompting that took a minute to figure out. Once I ironed out the instructions and found what worked, the results were consistent enough to trust.