The class of 2025 entered the worst entry-level job market in five years. Now, a growing number of them are using AI tools during live job interviews, and a cottage industry of startups is rushing to sell them the tools to do just that. Whether this constitutes delusion or common sense depends on which side of the recruiting table you sit on, but the numbers behind the trend are not in dispute.

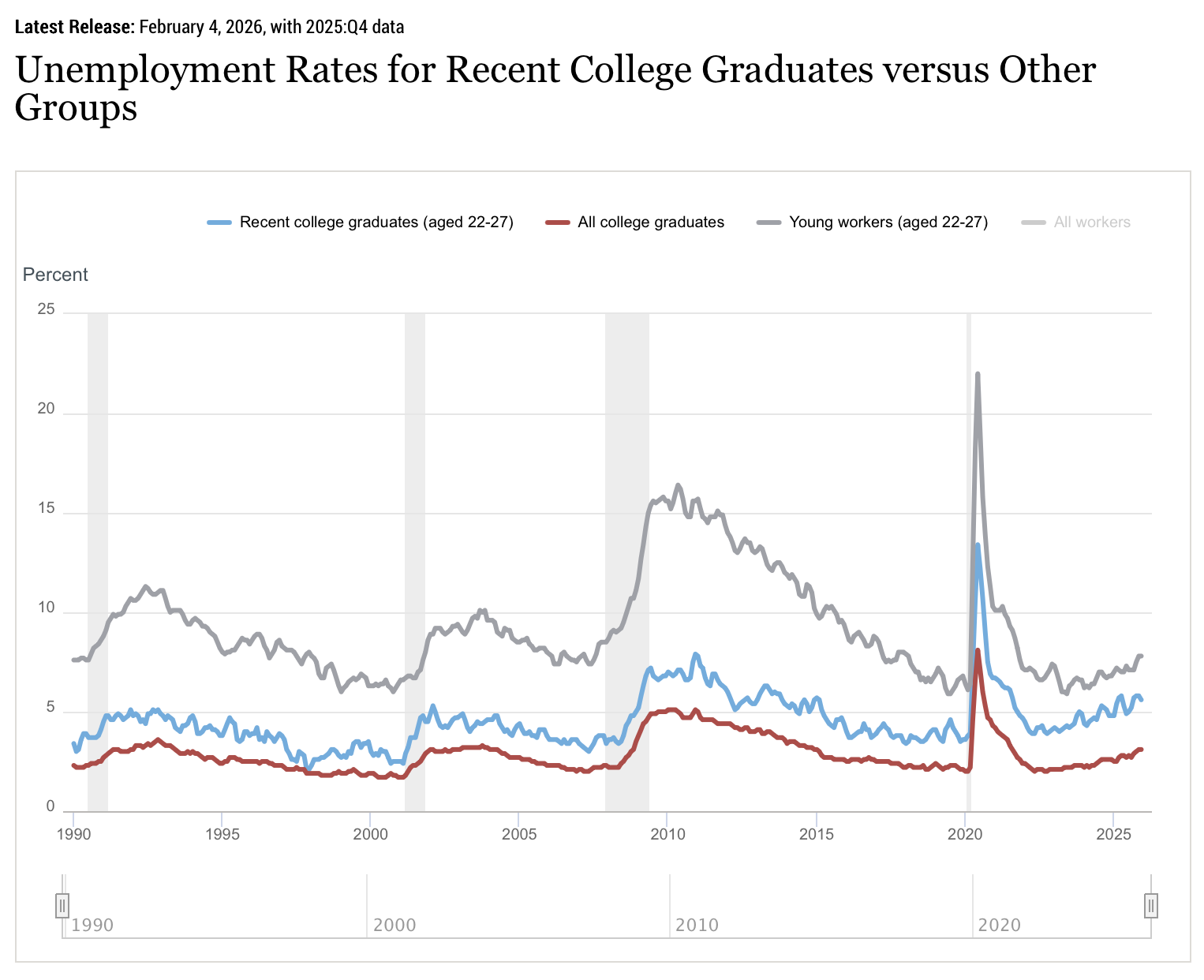

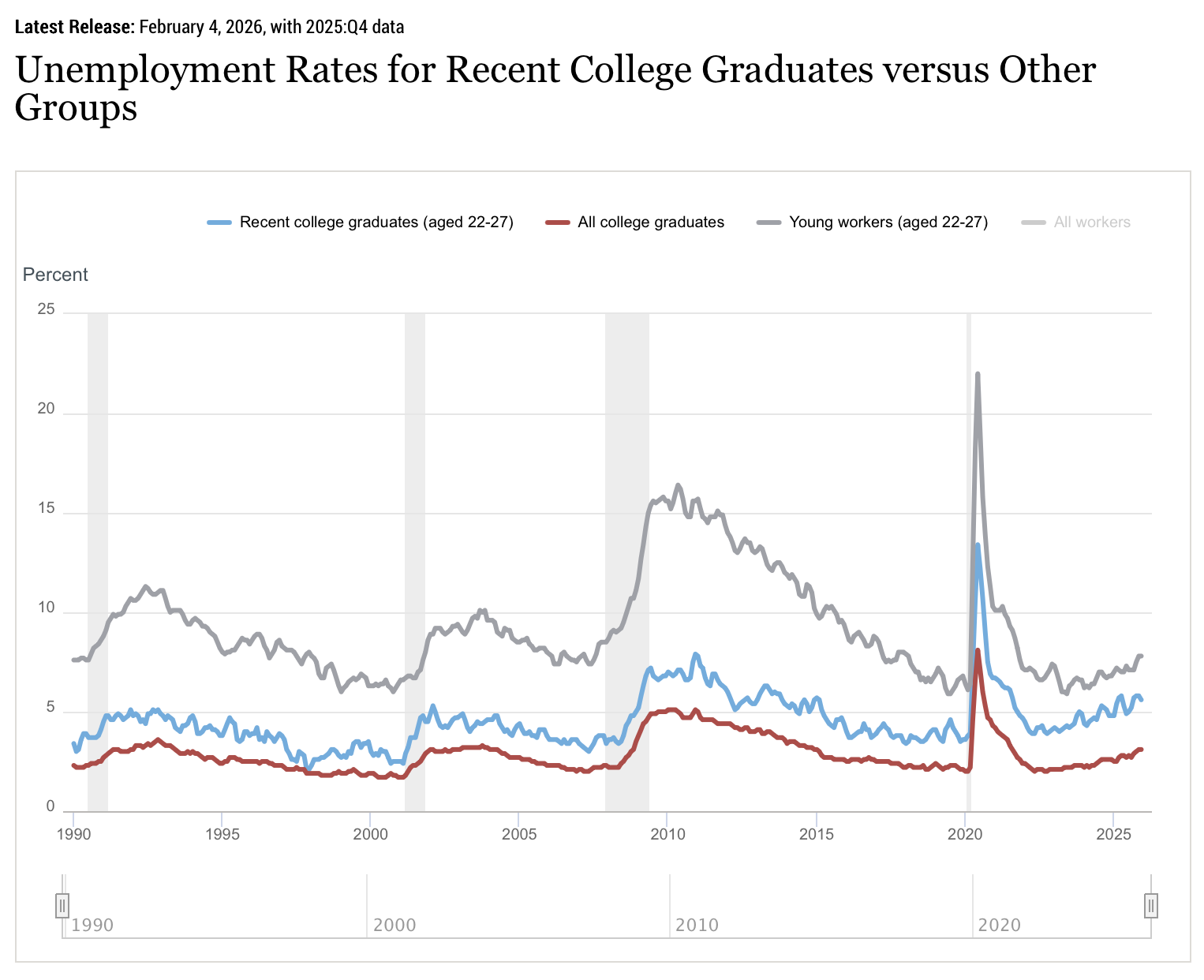

Unemployment among recent college graduates aged 22 to 27 rose to 5.7 percent by the end of 2025, according to the Federal Reserve Bank of New York, well above the national average of 4.2 percent. The underemployment rate, which measures graduates working in jobs that don’t require a degree, was 42.5 percent, the highest level since 2020. Once the default destination for ambitious graduates, the tech sector shed approximately 245,000 jobs in 2025, according to tracking data from Layoffs.fyi and TrueUp. Another 59,000 were cut in the first three months of 2026.

Graduates entering this market have watched an older cohort get hired, promoted, and then fired at companies like Meta, Amazon and Google within 18 months. The lesson they learned was not subtle: competence and loyalty are not sufficient defenses. So they came armed with the technology their universities had spent four years teaching them to use.

Tools and companies that sell them

The phenomenon surfaced this week in a press release from LockedIn AI, a startup that sells a product called DUO: a service that combines real-time AI transcription of interview questions with a live human coach who can see a candidate’s screen and provide strategic guidance during the conversation. The press release, distributed via GlobeNewswire, is framed as a trend piece on intergenerational sustainability. More precisely, it is a product advertisement.

LockedIn AI is not alone. Its founder, Kagehiro Mitsuyami, also co-founded Final Round AI, which sells a similar product. Both companies have faced questions about the authenticity of their marketing: some reviews on Trustpilot are alleged to be generated by artificial intelligence, and independent reviewers have noted that the app can be seen by interviewers when candidates switch between windows. A Gartner survey of 3,000 job seekers found that six percent admitted to interview fraud, including having someone else impersonate them. Fifty-nine percent of hiring managers suspect that candidates are using AI to misrepresent themselves.

The market for these tools is growing precisely because the conditions that created them are not improving, but getting worse. The National Association of Colleges and Employers found that 45 percent of employers rated the job market for the class of 2026 as “fair,” compared with “good” last year. Hiring projections for new graduates are essentially flat, with a 1.6 percent increase. For candidates who submit dozens of applications and receive interview invitations at rates below two percent, the temptation to seize every available advantage is great.

The hypocrisy argument

The most compelling argument for using AI in interviews is not about fairness in the abstract. It’s about a specific inconsistency in how tech companies deal with artificial intelligence.

Google CEO Sundar Pichai said in April 2025 that more than 30 percent of the company’s new code is now generated with the help of artificial intelligence, up from 25 percent six months earlier. Amazon, Microsoft, and Meta encourage their engineers to use AI coding tools every day. AI-powered applicant tracking systems screen and reject resumes before a human reads them. The recruitment pipeline is automated from end to end, except on the candidate side.

If university graduates are told that fluency in AI will define their careers, being asked to pretend the technology doesn’t exist during a 45-minute interview feels less like a test of competence and more like a test of aptitude. The companies that require them to do so are, in many cases, companies that will expect them to use AI tools from day one.

This argument has real force, but it also has its limits. There’s a difference between using AI to write code more efficiently and using AI to answer questions about your own experience, judgment, and problem-solving abilities. An interview, at least in theory, is a conversation designed to assess what a candidate knows and thinks. Handing those answers off to a language model, or to a human coach whispering through an earpiece, defeats the purpose of the exercise, no matter how unfair the exercise is.

Employers’ response

Companies are already adapting. In-person interview rounds increased from 24 percent in 2022 to 38 percent in 2025, according to recruitment industry data. Seventy-two percent of recruiting leaders now conduct at least one in-person phase to combat AI-powered fraud. Some firms have moved to whiteboard exercises, pair programming sessions, and unstructured conversations that are more difficult to augment with real-time tools.

The deeper question is whether the interview itself is the right mechanism for evaluating candidates in an AI-saturated job market. If the goal is to assess what the candidate can produce with the tools they will actually use on the job, it makes no sense to ban those tools during the assessment. If the goal is to assess raw cognitive ability and domain knowledge, AI assistance completely defeats the purpose. Most interviews try to do both, so the current system doesn’t satisfy anyone.

What is clear is that the class of 2025 did not create this problem. They inherited the aftermath of a pandemic-era hiring glut, aggressive cost-cutting, and a job market that is being restructured. The AI revolution simultaneously creates and destroys opportunity at a pace that neither employers nor candidates have fully mastered. Their decision to use AI in interviews is not a rebellion. It is the predictable behavior of rational actors in a system that has told them, time and again and in every context, that AI is not optional. The fact that the system now objects to their taking this message seriously is at least worth investigating.