Megan Ellis / Android Authority

TL;DR

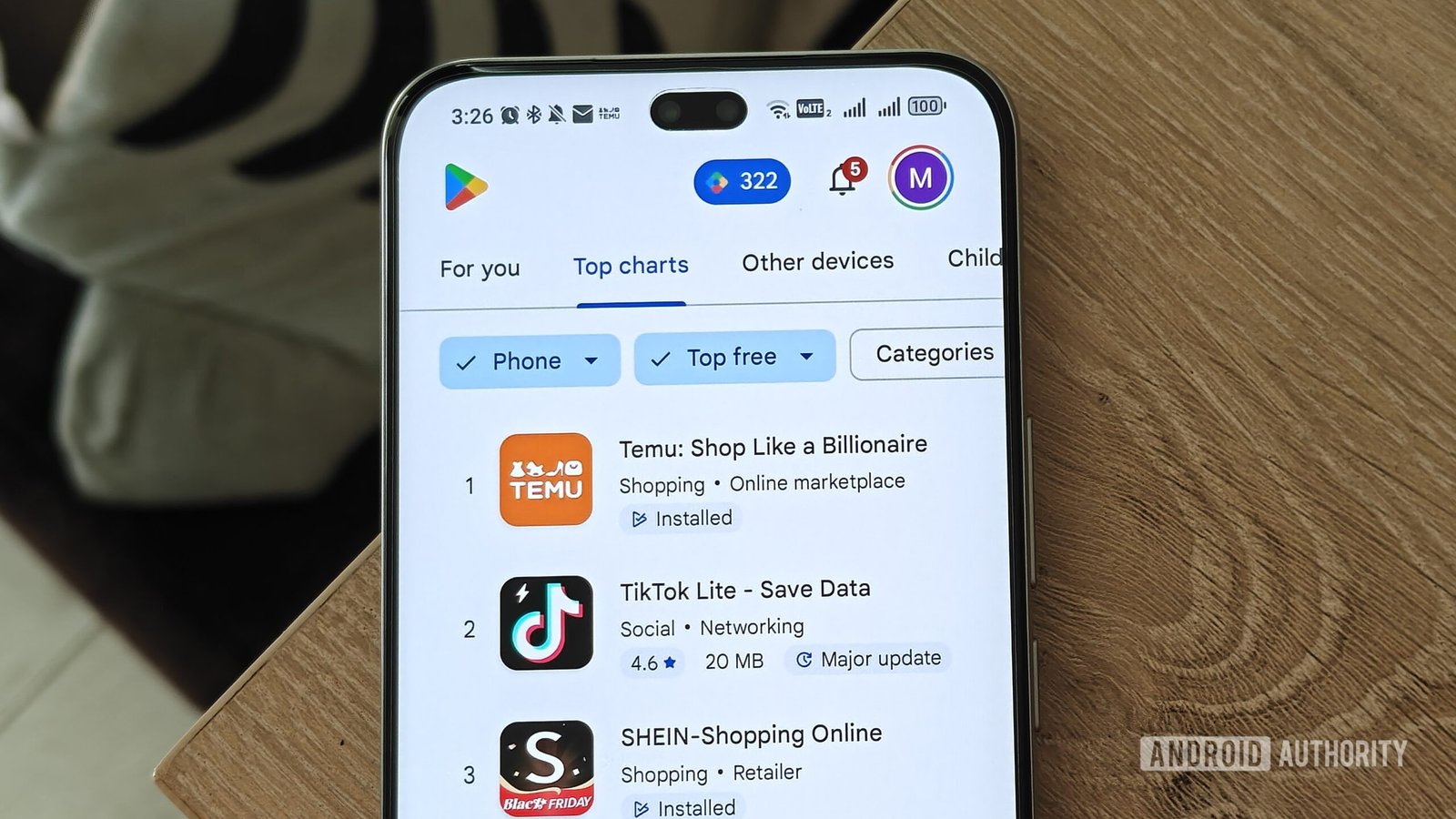

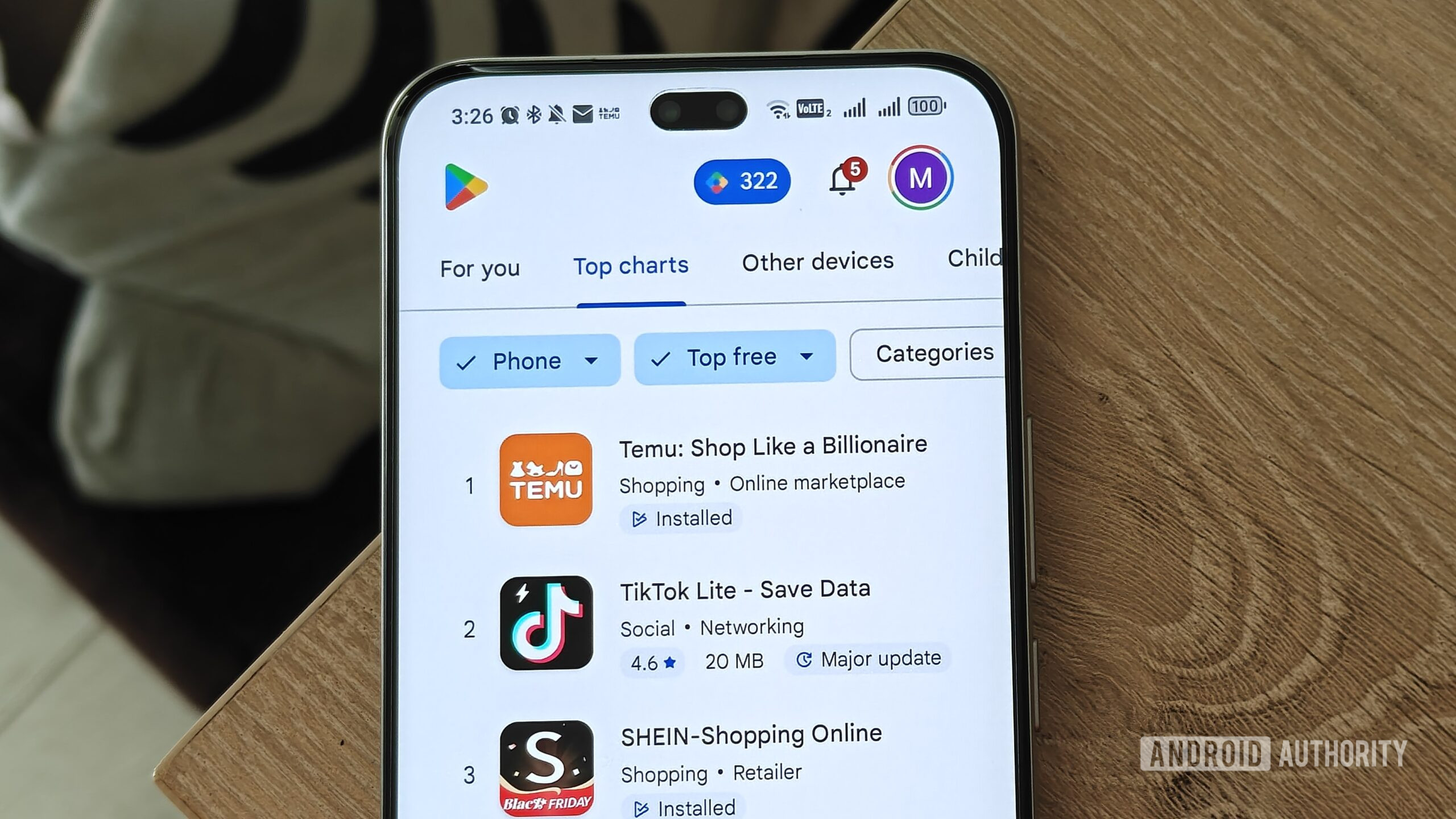

- A report claims that Apple and Google host numerous apps in their app stores that use artificial intelligence to create fake nude photos.

- Google has already responded to the report.

- The company says it “does not allow sexually explicit applications” and that “the investigation and enforcement process is ongoing.”

Artificial intelligence technology has developed rapidly in recent years. Unfortunately, the problem of AI-generated nude images has been spreading just as fast. Although Apple and Google claim to crack down on apps that enable this, a recent report found that their app stores contained numerous “nudify” apps. Google has now offered a response to the report.

As a quick refresher, the earlier report noted that Apple's and Google’s policies clearly restrict apps that encourage exploitation or abuse. However, searching for terms like “nude” or “strip” in the App Store or Google Play reportedly turns up dozens of these exploitative apps. Both marketplaces reportedly even advertise these tools and suggest them through autocomplete. More worryingly, some are rated E for Everyone, so even kids can download them.

In response, Google tells Android Authority that it is taking appropriate action and investigating the issue. A company spokesperson says:

Google Play does not allow apps with sexual content. When we are notified of a violation of our policies, we investigate and take appropriate action. Many of the apps referenced in this report have been suspended from Google Play for violating our policies. Our investigation and enforcement process is ongoing.

It seems that Apple has also started to take action. The Cupertino-based firm also responded to the earlier report, telling Bloomberg that it had removed 15 apps. For reference, the report found 20 nudify apps in the Play Store and 18 in the App Store.
