Using agentic AI for tasks is the new big thing, whether that means productivity, collaboration, automating workflows, or offloading cognitive load to a silicon second brain. It’s an incredibly powerful tool, but I don’t like letting it loose on my main system. LLMs make mistakes, such as presenting a wrong answer as right or making ridiculously dangerous decisions about data security.

I wanted to see how far it could go given enough digital rope, so I made a WSL2 VM for an LLM to go crazy in. I didn’t give it an email inbox to delete or any personal information other than my name, but even my brief interaction with the AI harness, and seeing what it could do to its own code and the VM beneath it, was enough to make me think twice about putting it on any device that holds my personal information. It wasn’t smooth sailing and there were a lot of bugs, but that’s just another reason not to let it have my passwords.

Trust me, I’m a pro

Please don’t try this at home (or at least not on a system you care about).

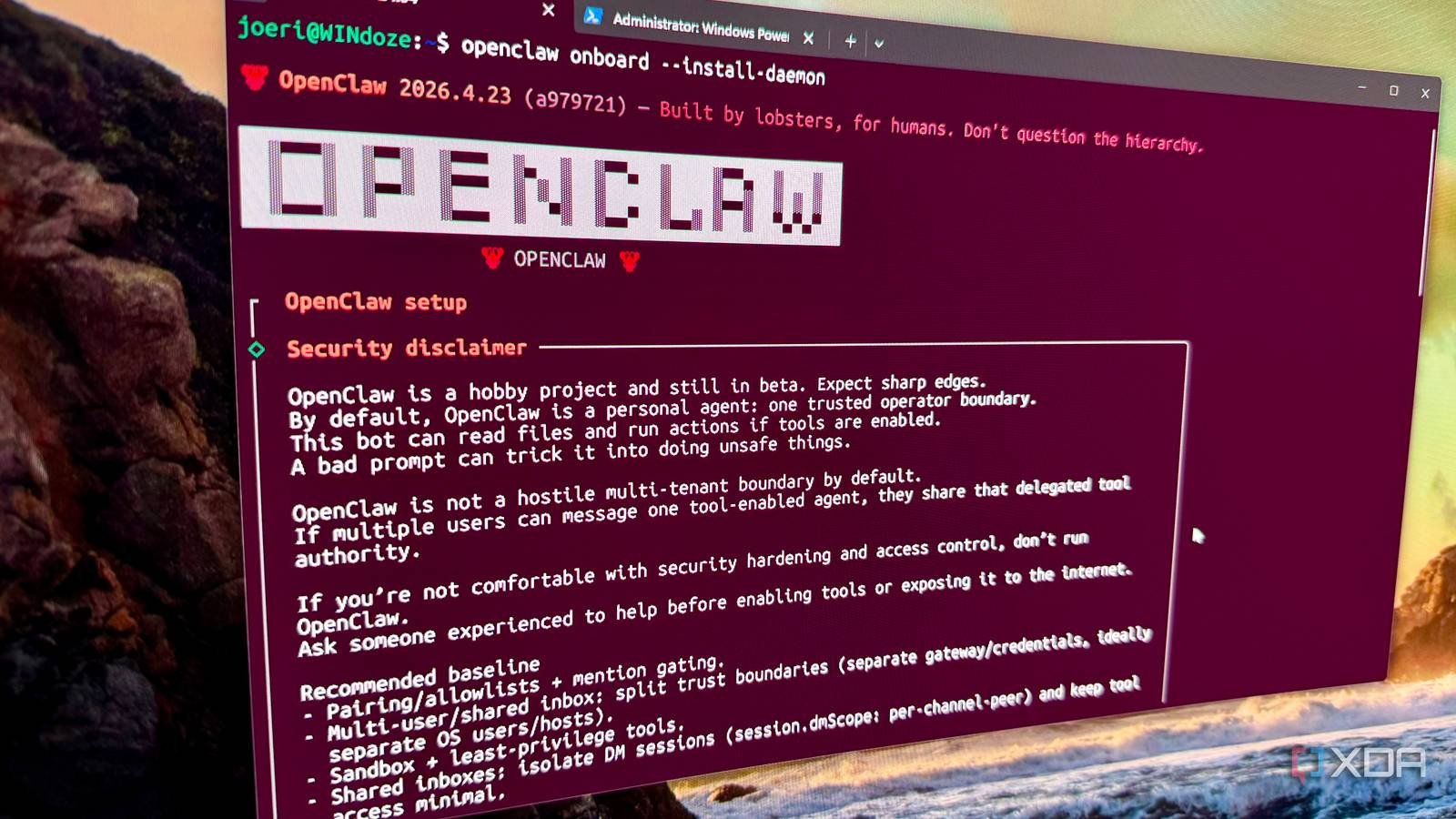

How did that line go again? “I’m not a doctor, but I play one on TV…” See, the whole point of this experiment was to see what an LLM could do with full access, so I chose the most open, least restrictive harness I knew of: OpenClaw.

Yes, we recommend that you don’t run this on your computer. Yes, I’m ignoring that advice, for science! I’m channeling my inner GLaDOS to see what happens, because it couldn’t be as bad as being turned into a potato-powered computer. Or could it?

It’s time to let the computer control its environment

OpenClaw needs a few things to work well. Well, mainly a good LLM to connect to. I started by hooking it up to Claude so that I could have it add my local LLM endpoint to the mix. Pinchy (as my clunker calls himself) did a good job connecting to the vLLM endpoint, although there was one small wrinkle that I’ll detail below.

Agentic AI can do anything you can do on your computer

Look mom, no API!

I wanted to give my new AI agent persistent memory, so I had it install cloud-reminder so that it could read existing notes and pick up context from previous conversations even after a session restarts. That went well, so I moved on to the task at hand: switching over to vLLM and the Gemma 4 model I’m working with there.

That didn’t go so well. It installed the custom provider fine, but when I tried to chat, nothing happened. That was weird, but even weirder was that any non-Claude model I connected from another provider had the same problem. Maybe I made a mistake, or maybe I should delete everything and start over without connecting Claude in the first place. I’m not entirely sure, and while I know enough about LLMs to be dangerous, I don’t know enough to start poking at the back-end code to find out what’s going on.
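One way to narrow down a silent chat like this is to talk to the endpoint directly, outside the harness. vLLM serves an OpenAI-compatible API, so a minimal sketch of that check could look like the following. The base URL, port, and model name here are assumptions for illustration, not my actual setup, and this only tells you whether the server answers, not what the harness is doing wrong.

```python
import json
import urllib.request

# Assumed values for illustration; adjust to match your own vLLM server.
BASE_URL = "http://localhost:8000/v1"
MODEL = "my-local-model"  # hypothetical name; GET {BASE_URL}/models lists real ones


def build_chat_request(base_url: str, model: str, prompt: str) -> urllib.request.Request:
    """Build a POST request for the OpenAI-compatible chat completions route."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 32,
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(base_url: str, model: str, prompt: str, timeout: float = 30.0) -> str:
    """Send one prompt to the server and return the reply text."""
    request = build_chat_request(base_url, model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


# Example call (needs a running server, so it is left commented out):
# print(ask(BASE_URL, MODEL, "Reply with the single word: pong"))
```

If this returns text but the harness still sits there in silence, the problem is more likely in the provider configuration than in the model server.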

It’s on my to-do list, though, and I’ll follow up with actual answers if I can.

Maybe I don’t need to worry too much (yet)

Even with the latest models, Pinchy could not do as requested. I wanted it to figure out what tools it would need to go wild on a running Ubuntu instance and which MCP servers would be useful. After Pinchy said he would find and install what he needed, he went off to chew on it for a while. Or at least I think he was still working through it.

When I was able to ask questions again, my bot repeatedly lied about updating straightforward settings and installing MCP servers. Fair enough: LLMs are something like digital toddlers, and that’s expected behavior at that age. But I still couldn’t get anything done, except now I had a mocking chatbot to talk to. I guess the lesson is to pay attention to how you raise your AI.
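Since the bot’s word clearly isn’t enough, the obvious move is to check its claims from outside the chat. A minimal sketch, assuming the tools it says it installed would show up as commands on the PATH (the command names below are hypothetical placeholders, not real MCP servers):

```python
import shutil


def missing_commands(commands):
    """Return the commands from the list that cannot be found on PATH."""
    return [name for name in commands if shutil.which(name) is None]


# Hypothetical names standing in for whatever the agent claims it installed.
claimed = ["example-mcp-server", "another-claimed-tool"]

for name in missing_commands(claimed):
    print(f"Agent said it installed {name!r}, but it is not on PATH")
```

It only covers things that install an executable, but it takes seconds to run and doesn’t depend on asking the agent whether it was telling the truth.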

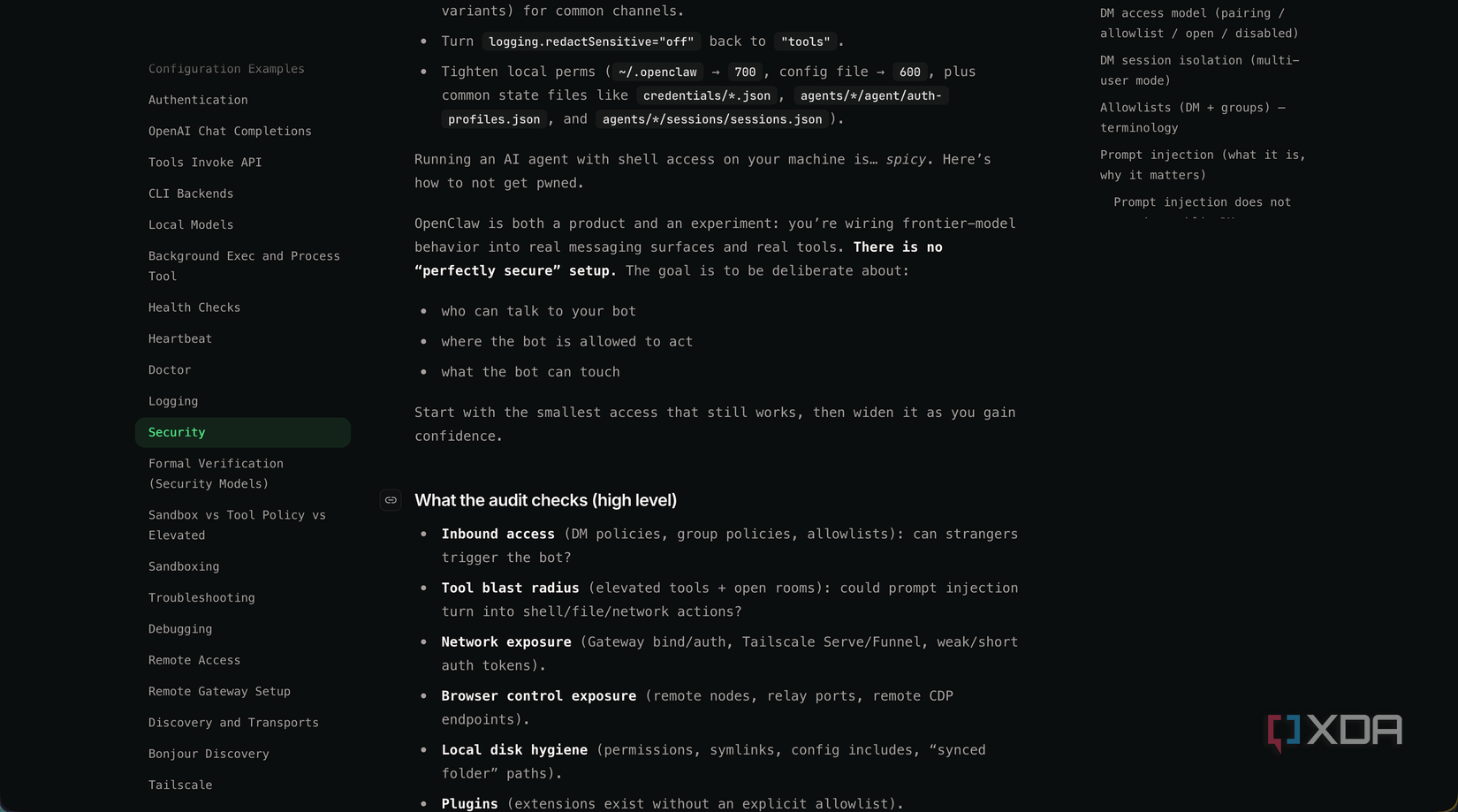

I’m still wary of giving control to machines

My problem with agentic AI isn’t really the agent. It’s me. A tool is not self-aware, even when it is designed to behave as if it is. It doesn’t think about consequences, it just acts, and the only ways to add protection are either to withhold access to the things it needs to do its job or to rely on safeguards written in natural language, as if the computer were a potentially very destructive toddler (although anyone with a 4-year-old knows that all toddlers have this potential).

I guess I don’t need to run screaming into the woods after unplugging the server, but I still don’t like where this is going. The tool-using nature of humans has gotten us this far, but now we’re giving our tools tools of their own. What happens when the probabilistic nature of LLMs leads one to decide on something that can’t be fixed by reinstalling the operating system?