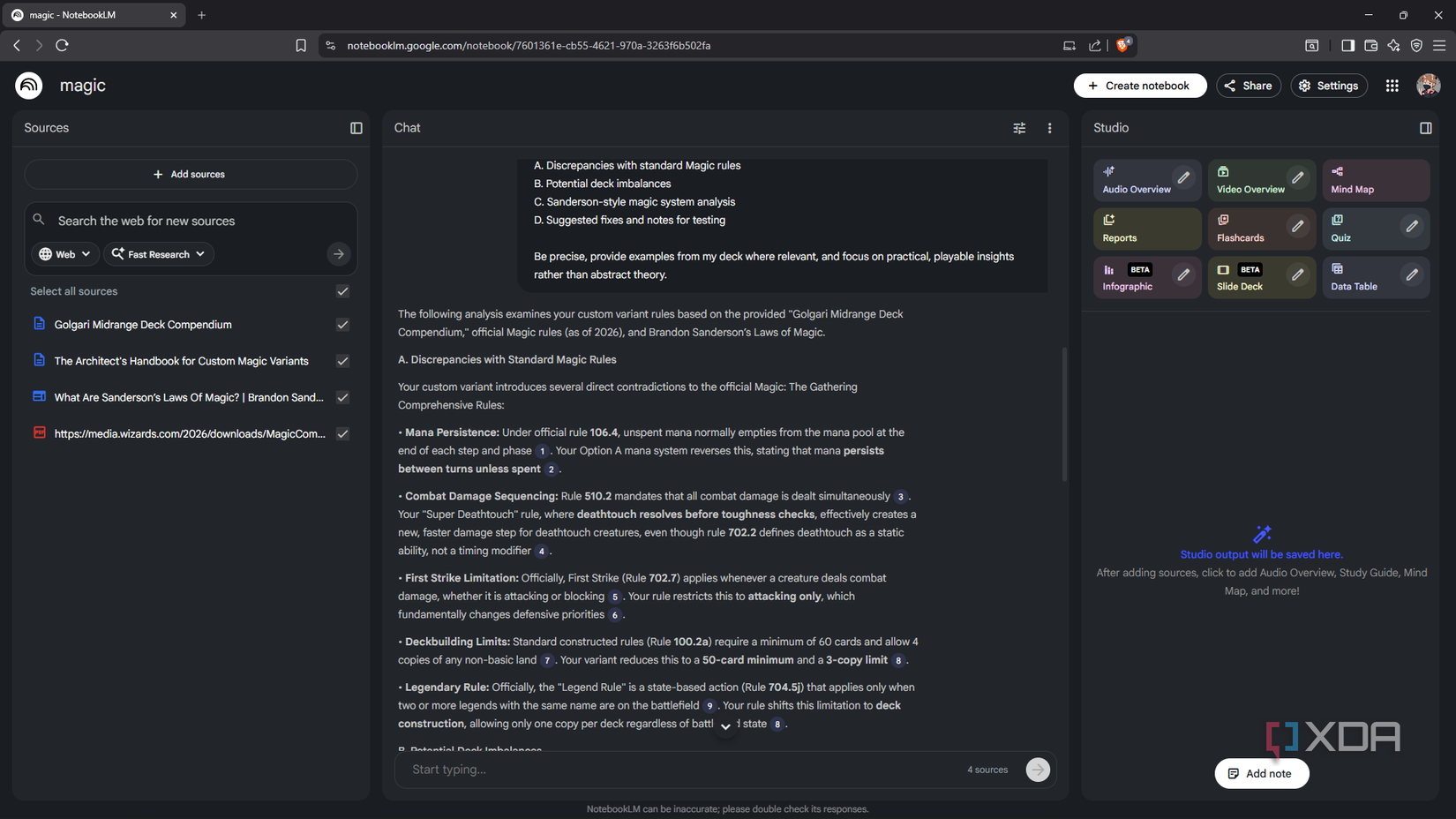

NotebookLM is easily one of the best AI tools I’ve used, and I’ve used it long enough to have a real opinion on it. The way it stays grounded in your sources, its citation behavior, and its interactive mind maps are some of the most useful features in my workflow. Nothing else does it to the same degree, and it’s free, which always matters.

The thing is, it’s a Google product. Your files go to Google’s servers, are processed on its infrastructure, and sit in your account until you delete them. Google makes it very clear that your content doesn’t train its models, and as far as I can tell, it means it. But that’s not the same as your files never touching Google’s servers at all. That’s perfectly fine for most tasks. It stops being fine when the documents are private.

Why give up on NotebookLM when it’s so good?

When cloud AI stops being the right call

According to Google’s own documentation, NotebookLM doesn’t use the sources you upload to train its underlying models, and your interactions, including your content, are only reviewed by humans if you submit feedback. Your queries aren’t used for training either. However, uploaded sources, generated Audio Overviews, and chat history are stored for as long as the notebook exists. Usage metadata, such as how often you use the tool and which features you use, falls under Google’s standard product terms. And the practical reality is that your documents are processed server-side on Google’s infrastructure. That’s simply how the product works on a personal Google account.

I recently had a health test and received a detailed report packed with information: some of it I didn’t know how to interpret, and some of it was simply too much to read. Naturally, I wanted to analyze it and get a better picture of what was going on. But when I went to upload it to NotebookLM, I hesitated. I’d recently watched a few Reels about data privacy and couldn’t shake the anxiety of all my health information leaving my hands. So I turned to a local LLM instead. Privacy is one of the main reasons I run one anyway.

How my local installation handles files

And three places it gets information from

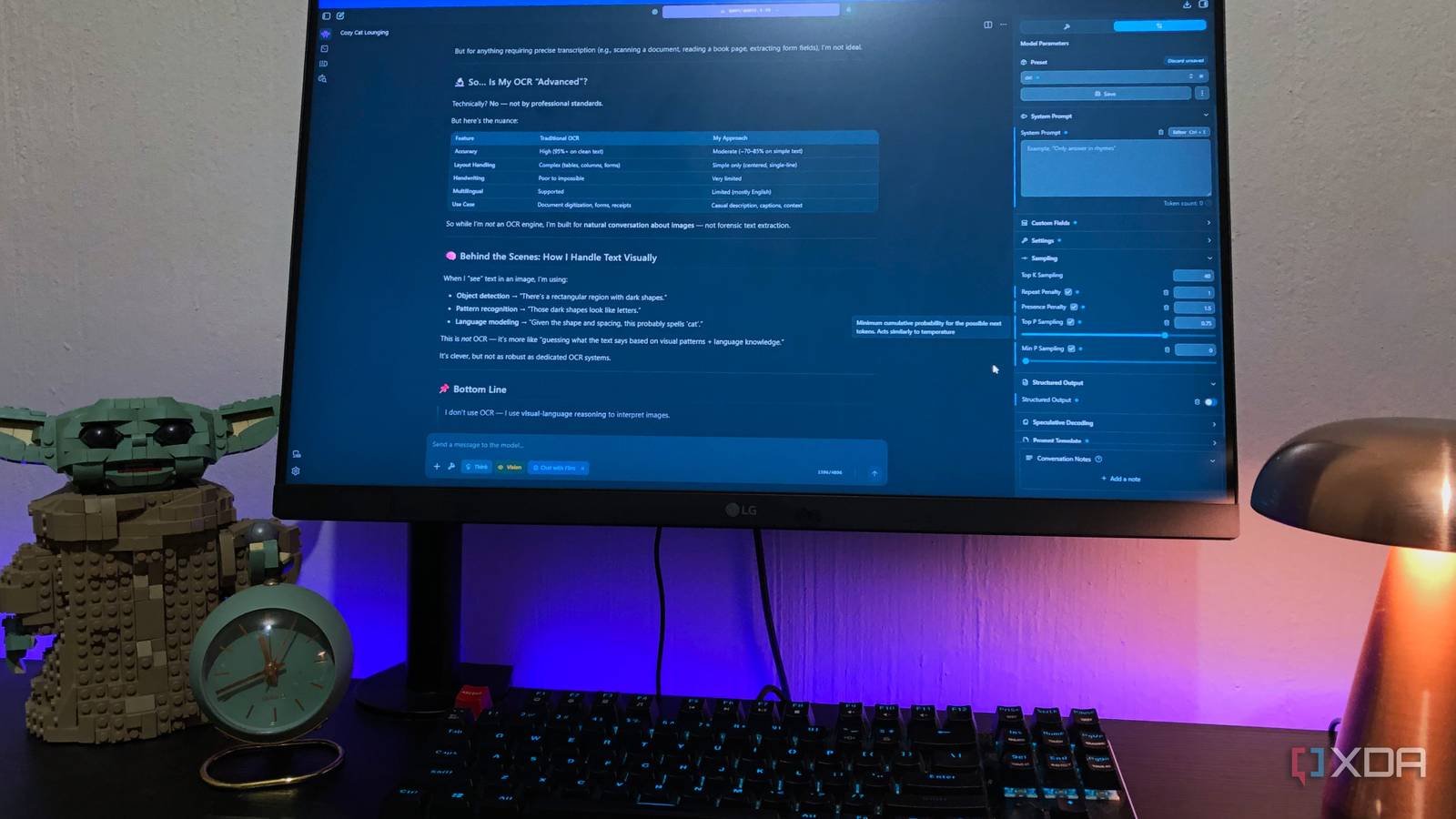

LM Studio has had built-in document support since version 0.3.0, released in mid-2024, and it handles documents sensibly. When you add a file to a conversation, it first checks whether the document fits in the model’s active context window. If it does, the whole thing goes straight into the prompt: no retrieval happens, and the full content is fed to the model at once. If the document is too long, LM Studio switches to RAG: the document is split into chunks, each chunk is embedded, and when you send a query, it retrieves the most semantically relevant chunks and inserts them into the prompt. The model then answers based on whatever made it into the prompt.
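The fit-or-retrieve decision described above can be sketched in a few lines. This is a simplified illustration, not LM Studio’s code: it assumes roughly 4 characters per token, uses word-count vectors with cosine similarity as a stand-in for a real embedding model, and the `context_window`, `reserve`, chunk size, and `top_k` values are all hypothetical defaults.

```python
import math
import re
from collections import Counter


def estimate_tokens(text: str) -> int:
    # Rough heuristic (assumption): about 4 characters per token in English.
    return max(1, len(text) // 4)


def chunk(text: str, size: int = 200, overlap: int = 40) -> list[str]:
    # Overlapping windows of `size` words, so text near a boundary
    # appears in both neighboring chunks.
    words = text.split()
    step = size - overlap
    return [" ".join(words[i:i + size]) for i in range(0, max(len(words) - overlap, 1), step)]


def vectorize(text: str) -> Counter:
    # Word counts stand in for a real embedding model here.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_prompt(document: str, query: str, context_window: int = 8192,
                 reserve: int = 1024, top_k: int = 3) -> str:
    # Case 1: the whole document fits, so inject it verbatim (no retrieval).
    if estimate_tokens(document) + estimate_tokens(query) <= context_window - reserve:
        return f"Document:\n{document}\n\nQuestion: {query}"
    # Case 2: too long, so chunk, rank by similarity to the query, keep top_k.
    q = vectorize(query)
    ranked = sorted(chunk(document), key=lambda c: cosine(vectorize(c), q), reverse=True)
    excerpts = "\n---\n".join(ranked[:top_k])
    return f"Relevant excerpts:\n{excerpts}\n\nQuestion: {query}"
```

The `reserve` parameter leaves room in the window for the model’s answer; a short report takes the first branch, while a long one only contributes its best-scoring chunks.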

The other big difference from NotebookLM is that the model still has all of its training knowledge to draw on. NotebookLM is source-grounded by design; that’s a feature, and the whole point. But sometimes you don’t want that boundary. When I was working through my genetics report, I didn’t want the model to just repeat what was written. I wanted it to relate the values to their clinical context, explain what a marker usually means beyond the document, or draw on reference ranges it already knew. That requires a model with its own knowledge. And since I added the Brave Search MCP, it can pull in anything that needs to be current from the web mid-conversation. So I get RAG over my document, the model’s training knowledge, and live web access in the same session, without switching tools.
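LM Studio reads MCP servers from an `mcp.json` file that uses the `mcpServers` layout popularized by Cursor. A minimal sketch that prints such an entry for Brave Search, assuming the server is launched with `npx` from the npm package `@modelcontextprotocol/server-brave-search` and reads its key from `BRAVE_API_KEY`. Brave now publishes its own MCP server as well, so check the server’s README for the current package name before pasting.

```python
import json
import os


def brave_mcp_config(api_key: str) -> dict:
    """Build an mcp.json-style config with a single Brave Search server entry.

    Assumptions: the server is started with npx from the npm package
    "@modelcontextprotocol/server-brave-search" and reads BRAVE_API_KEY.
    """
    return {
        "mcpServers": {
            "brave-search": {
                "command": "npx",
                "args": ["-y", "@modelcontextprotocol/server-brave-search"],
                "env": {"BRAVE_API_KEY": api_key},
            }
        }
    }


if __name__ == "__main__":
    # Print the JSON so it can be pasted into LM Studio's mcp.json editor.
    key = os.environ.get("BRAVE_API_KEY", "YOUR_API_KEY_HERE")
    print(json.dumps(brave_mcp_config(key), indent=2))
```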

The model I’m running is Qwen 3.5 9B, which came out in early March 2026. The reason it runs well on an 8GB GPU is its architecture: Qwen 3.5 uses Gated Delta Networks (GDN), which keep the KV cache footprint significantly smaller than most models of this size, so I can raise the context length above LM Studio’s default without immediately hitting a wall. When it comes to prompts, local models handle open-ended instructions less gracefully than cloud models do, and they don’t infer as much from context. So “parse the following document and note any values outside the typical reference ranges, explaining each in plain language” will beat a vague question every time.
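Why a smaller KV cache matters comes down to arithmetic: in every standard attention layer, the cache grows linearly with context length. A back-of-the-envelope sketch, using a hypothetical 32-layer configuration rather than Qwen 3.5 9B’s published numbers, and assuming a hybrid where only one layer in four uses full attention while the rest are GDN layers with a fixed-size state (which the function does not count):

```python
GIB = 1024 ** 3


def kv_cache_bytes(tokens: int, attn_layers: int, kv_heads: int,
                   head_dim: int, bytes_per_value: int = 2) -> int:
    """Memory for keys and values across all full-attention layers.

    Factor 2 = one K and one V vector per KV head, per layer, per token.
    bytes_per_value=2 assumes an FP16/BF16 cache. GDN layers keep a
    fixed-size state instead, so they are not counted here.
    """
    return 2 * attn_layers * kv_heads * head_dim * bytes_per_value * tokens


if __name__ == "__main__":
    ctx = 32_768  # hypothetical context length
    all_attention = kv_cache_bytes(ctx, attn_layers=32, kv_heads=8, head_dim=128)
    hybrid = kv_cache_bytes(ctx, attn_layers=8, kv_heads=8, head_dim=128)  # 1 in 4 layers
    print(all_attention / GIB, hybrid / GIB)  # 4.0 1.0
```

Under these made-up numbers, the hybrid layout needs a quarter of the cache at the same context length, which is the kind of headroom that lets a longer context fit on an 8GB card next to the model weights.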

An honest case for keeping NotebookLM around

There are trade-offs I can’t ignore

NotebookLM runs on Gemini, with a context window that can hold up to one million tokens per source. That’s about 750,000 words, the equivalent of several very long books held in memory at once. By comparison, LM Studio lets you attach at most five files at a time, with a combined size of just 30MB. Even with a longer context length and GDN’s lighter memory load, I’m working with a fraction of NotebookLM’s ceiling, and chunking takes over as documents get longer.
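The numbers in that comparison are easy to sanity-check. A small sketch, assuming the common rule of thumb of 0.75 English words per token, reading “30MB” as decimal megabytes, and using a hypothetical 32,768-token local context:

```python
# Back-of-the-envelope numbers for the limits discussed above.
WORDS_PER_TOKEN = 0.75            # rule-of-thumb ratio for English text (assumption)
MAX_FILES = 5                     # LM Studio attachment limit, as stated above
MAX_TOTAL_BYTES = 30 * 1_000_000  # "30MB" read as decimal megabytes (assumption)


def tokens_to_words(tokens: int) -> int:
    """Approximate how many English words fit in a given number of tokens."""
    return int(tokens * WORDS_PER_TOKEN)


def fits_attachment_limits(file_sizes: list[int]) -> bool:
    """True if a batch of files (sizes in bytes) stays within both limits."""
    return len(file_sizes) <= MAX_FILES and sum(file_sizes) <= MAX_TOTAL_BYTES


def share_of_ceiling(local_context: int, ceiling: int = 1_000_000) -> float:
    """Fraction of a 1M-token window that a local context length covers."""
    return local_context / ceiling


if __name__ == "__main__":
    print(tokens_to_words(1_000_000))                # 750000
    print(fits_attachment_limits([12_000_000] * 3))  # False: 36MB combined
    print(f"{share_of_ceiling(32_768):.1%}")         # 3.3%
```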

RAG retrieval works by scoring document chunks against your query and surfacing the most relevant ones. That’s fine until the answer you need lives in a chunk that doesn’t score well against the specific words you’re using. NotebookLM mostly avoids this because it fits so much into context at once that retrieval misses are less common. For very long documents, such as years of lab results combined into one file, a lengthy contract, or a complete medical history, NotebookLM is the more reliable tool (context-wise), and I wouldn’t argue otherwise.
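A toy illustration of that failure mode, using made-up report fragments. It scores chunks by plain word overlap rather than embeddings, which exaggerates the problem (an embedding model would likely connect “cholesterol” and “LDL”), but the cutoff works the same way: any chunk outside the top `k` never reaches the model.

```python
import re


def words(text: str) -> set[str]:
    """Lowercased word set; a crude stand-in for an embedding model."""
    return set(re.findall(r"[a-z]+", text.lower()))


def score(chunk: str, query: str) -> float:
    """Share of query words that also appear in the chunk."""
    q = words(query)
    return len(words(chunk) & q) / len(q) if q else 0.0


def retrieve(chunks: list[str], query: str, k: int = 1) -> list[str]:
    """Keep only the k best-scoring chunks; everything else never reaches the model."""
    return sorted(chunks, key=lambda c: score(c, query), reverse=True)[:k]


# Made-up report fragments, for illustration only.
chunks = [
    "Cholesterol panel: total cholesterol 182 mg/dL, within range.",
    "Lipid follow-up: LDL 161 mg/dL, flagged above the reference range.",
    "Vitamin D 31 ng/mL, within range.",
]

if __name__ == "__main__":
    print(retrieve(chunks, "Is my cholesterol high?"))
```

Asked whether cholesterol is high, this retriever returns only the “within range” panel; the one chunk that actually flags a problem shares no words with the query and is dropped.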

If you want to stay local even for longer documents, the most practical approach is to split them before you start: feed in sections rather than the whole file, and ask targeted questions about each one. You can use a self-hosted tool like OmniTools for the splitting, so the workflow still stays local.
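If the report is already plain text (copied out of the PDF or converted with any local tool), a few lines of standard-library Python can do the splitting instead. This is a minimal sketch and not part of OmniTools: it groups paragraphs into sections under a character budget, and the 6,000-character default and the `_partNN.txt` output names are arbitrary choices.

```python
import sys
from pathlib import Path


def split_sections(text: str, max_chars: int = 6000) -> list[str]:
    """Group paragraphs into sections of at most max_chars characters.

    Paragraphs are never cut in half; a single paragraph longer than
    max_chars becomes its own (oversized) section.
    """
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    sections: list[str] = []
    current = ""
    for p in paragraphs:
        candidate = f"{current}\n\n{p}" if current else p
        if current and len(candidate) > max_chars:
            sections.append(current)  # close the current section
            current = p               # start a new one with this paragraph
        else:
            current = candidate
    if current:
        sections.append(current)
    return sections


if __name__ == "__main__":
    # Usage: python split_sections.py report.txt  -> writes report_part01.txt, ...
    source = Path(sys.argv[1])
    for i, section in enumerate(split_sections(source.read_text(encoding="utf-8")), start=1):
        out = source.with_name(f"{source.stem}_part{i:02d}.txt")
        out.write_text(section, encoding="utf-8")
        print(f"wrote {out} ({len(section)} chars)")
```

Splitting on paragraph boundaries keeps each lab value next to its label, which matters more than hitting an exact size.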

Some documents belong on your machine

NotebookLM isn’t going anywhere for me. I still use it constantly for research, work documents, reading piles, and anything else where cloud storage isn’t a concern. But some documents I’d rather not hand to Google, and for those, the local LLM turned out better than I expected. RAG isn’t perfect and the context ceiling is real, but to me it’s not a deal breaker. It’s just a different tool for a different type of document.