In short: Anthropic has agreed to access roughly 3.5 gigawatts of next-generation Google TPU computing power through Broadcom starting in 2027, its largest infrastructure commitment to date, while announcing that its run-rate revenue has surpassed $30 billion, more than tripling from roughly $9 billion.

Anthropic announced it is securing multiple gigawatts of next-generation computing capacity through a new deal with Google and Broadcom, while releasing revenue growth figures that show the AI lab now requires infrastructure on a scale that seemed unimaginable two years ago. The agreement, announced on April 6, 2026, gives Anthropic access to approximately 3.5 gigawatts of Google tensor processing unit (TPU) capacity through Broadcom starting in 2027, building on the 1 gigawatt already supplied to the company in 2026.

Krishna Rao, Chief Financial Officer of Anthropic, described it as “our most significant computing commitment to date,” one that continues the company’s “disciplined approach to infrastructure scaling.” The majority of the new capacity will be located in the United States, expanding on Anthropic’s November 2025 commitment to invest $50 billion in America’s AI computing infrastructure.

Three sides, one infrastructure layer

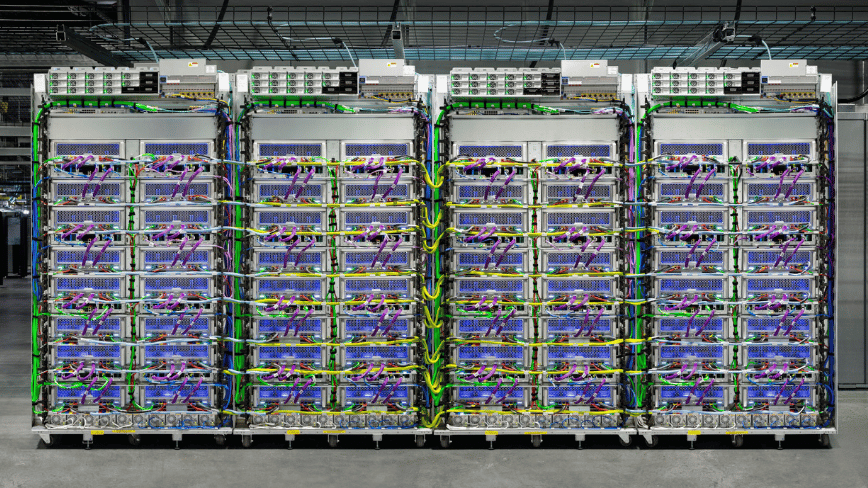

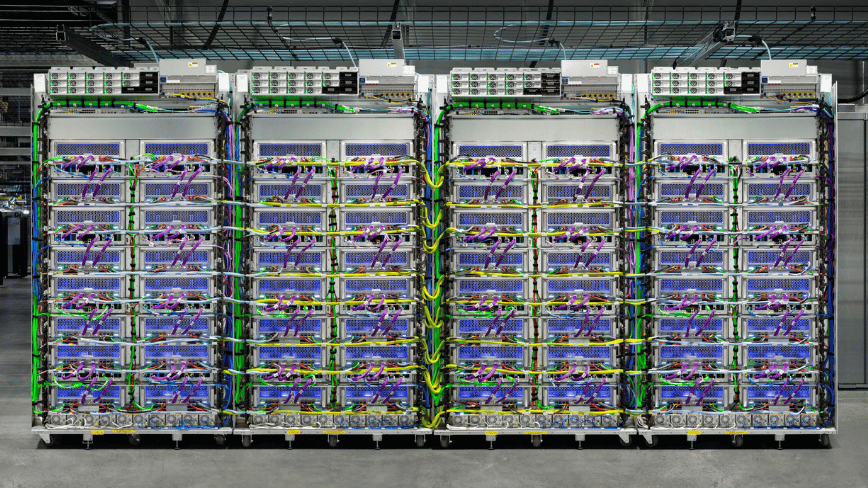

The announcement is as much about Broadcom as it is about Anthropic or Google. Under the new agreement, Broadcom acts as an intermediary layer between Google’s custom silicon and Anthropic’s training and inference workloads. In parallel, Broadcom signed a separate long-term contract with Google to design and supply next-generation custom TPU chips, and a supply assurance contract to provide networking and other components for Google’s next-generation AI data racks through 2031.

This makes Broadcom an increasingly indispensable node in the AI infrastructure graph. The chip maker, led by CEO Hock Tan, doesn’t build AI models; it builds the silicon and interconnects on which AI models run. Broadcom shares rose nearly 3% in extended trading on the announcement, a reaction that reflected investor appetite for companies at the physical layer of the AI stack. Mizuho analysts, led by Vijay Rakesh, estimated that Broadcom would record $21 billion in AI revenue from Anthropic in 2026 alone, rising to $42 billion in 2027. Even as projections, those figures indicate the financial weight of the deal.

Broadcom first hinted at the extent of its Anthropic connection in September 2025, when Hock Tan revealed during an earnings call that a mystery customer had placed a $10 billion order for custom TPU racks. In December 2025, it confirmed that the customer was Anthropic, and that an additional $11 billion order was coming. The April 2026 announcement is the third act of the same story: a partnership that has grown from a $21 billion order commitment into multi-gigawatt infrastructure with a specific delivery schedule.

Revenue and customers: the numbers that drive infrastructure

The compute agreement is only understandable in light of Anthropic’s commercial growth. The company says its run-rate revenue has now surpassed $30 billion, up from about $9 billion at the end of 2025. This trajectory, a more than three-fold increase in roughly three months, is the result of Anthropic’s compounding enterprise sales momentum, which has accelerated dramatically since it closed its Series G funding round on February 12, 2026. That round, at a $380 billion valuation, was led by GIC and Coatue and co-led by D. E. Shaw Ventures, Dragoneer, Founders Fund, ICONIQ and MGX.

When the Series G closed, Anthropic reported that more than 500 business customers were each spending more than $1 million on an annualized basis. According to the April announcement, this number has doubled to over 1,000 in less than two months. The rate of enterprise adoption is a proximate cause of the computing expansion: more revenue requires more inference capacity, more inference requires more training compute, and more training compute requires more gigawatts.
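The growth figures above can be checked with a few lines of arithmetic. The sketch below is illustrative only: it uses the article’s rounded figures ($9 billion to $30 billion over roughly three months, and 500 to 1,000 large customers), and the function names and the assumption of smooth monthly compounding are ours, not Anthropic’s.

```python
def growth_multiple(start: float, end: float) -> float:
    """Return how many times larger `end` is than `start`."""
    return end / start


def implied_monthly_growth(start: float, end: float, months: float) -> float:
    """Return the constant monthly growth rate that takes `start` to `end`.

    Assumes smooth compounding, which is a simplification; the article
    only gives the two endpoints and an approximate time span.
    """
    return (end / start) ** (1.0 / months) - 1.0


# Rounded figures from the article, in billions of dollars.
revenue_multiple = growth_multiple(9.0, 30.0)
monthly_rate = implied_monthly_growth(9.0, 30.0, 3.0)

# "More than 500" and "over 1,000" are lower bounds, so this is approximate.
customer_multiple = growth_multiple(500, 1000)

print(f"Revenue multiple: {revenue_multiple:.2f}x")
print(f"Implied monthly growth: {monthly_rate:.1%}")
print(f"Customer multiple: {customer_multiple:.1f}x")
```

On these rounded inputs the revenue multiple comes out a little above three, consistent with the “more than three-fold” description, and the implied compounding rate is just under 50% per month.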

Claude’s multi-cloud architecture

What sets Anthropic’s infrastructure approach apart from many of its peers is its explicit multi-vendor chip strategy. Claude is trained and served on three hardware platforms: Amazon’s Trainium chips, Google’s TPUs, and Nvidia GPUs. Anthropic says Claude is the only frontier model available across all three major cloud platforms (AWS, Google Cloud and Microsoft Azure), a claim that carries both commercial and technical weight.

The multi-vendor position gives Anthropic both resilience and negotiating leverage. If capacity is limited on any one platform, workloads can shift. If a chipmaker experiences a supply disruption, export controls or price pressure, Anthropic will not be exposed to the full force of that shock. The strategy has a precedent: Microsoft’s development of its own AI models reflects a similar instinct to hedge against single-vendor dependence, although in Microsoft’s case the hedge is against a partner rather than a hardware supplier.

The AWS relationship remains the foundation. By the end of 2024, Anthropic had named Amazon as its primary cloud and training partner, with total Amazon investment reaching $8 billion. Project Rainier, an Anthropic supercomputer cluster in Indiana that runs about 500,000 Amazon Trainium 2 chips, was expected to scale to more than one million Trainium 2 chips by the end of 2025. The Google relationship now extends to multi-gigawatt scale from 2027 through the new Broadcom deal.

US infrastructure commitment

The April deal is explicitly framed as an extension of Anthropic’s November 2025 domestic infrastructure commitment: a $50 billion commitment to America’s AI computing infrastructure, originally developed in partnership with U.K.-based neocloud operator Fluidstack, with data center sites in Texas and New York. The new capacity, most of it based in the US, extends this footprint into 2027.

This domestic emphasis is not accidental. The Trump administration’s AI Action Plan has explicitly targeted US computing as a strategic priority, and Anthropic, like its peers, has positioned its infrastructure investments accordingly. Whether this alignment reflects a sincere strategic conviction, a tactical regulatory posture, or both, the practical effect is the same: a significant portion of the world’s next-generation AI training capability is being anchored in American geography.

What does the compute deal say about the arms race?

The Anthropic-Google-Broadcom announcement is a data point in a pattern that has been building for 18 months. SoftBank’s $40 billion bridge loan to fund its OpenAI commitment reflected the same basic dynamic: AI labs have grown so fast that their computational demands now exceed what can be financed from revenue alone, requiring financial engineering on a scale once reserved for infrastructure utilities. Meta’s $27 billion infrastructure deal with Nebius represents parallel logic at the hyperscaler level.

The computing arms race is also reshaping how AI companies manage their relationships with services built on their models. Anthropic is no exception: the company recently moved to restrict access to Claude through certain third-party frameworks, a decision that shows how the cost dynamics of serving frontier models are forcing AI labs to make tough choices about which use cases they subsidize and which they price publicly.

The trajectory for Broadcom is simpler: the chipmaker, which two years ago was not widely discussed in the context of artificial intelligence, is now the load-bearing element of the infrastructure on which two of the world’s most consequential AI models, Google’s Gemini and Anthropic’s Claude, are built and served. That position, bolstered by a new multi-gigawatt deal for Google’s custom silicon and Anthropic’s access to TPUs through 2031, is the real story beneath the headline numbers. Nvidia remains the dominant force in AI accelerators, and Nvidia’s enterprise AI platform continues to expand its scope. But Broadcom’s rise as the custom silicon partner of choice for hyperscale AI computing is one of the defining changes in the semiconductor industry this decade.