Whether we like it or not, the age of agentic AI is upon us. What started as innocent Q&A banter with ChatGPT in 2022 has turned into an existential discussion about job security and the rise of the machines.

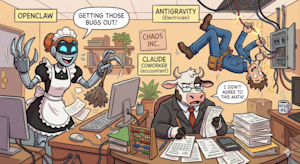

Recent releases such as Google Antigravity, Claude Cowork and OpenClaw have heightened fears that we are approaching artificial general intelligence (AGI). After playing with these tools for a while, here is a comparison.

First, we have OpenClaw (formerly known as Moltbot and Clawdbot). Exceeding 150,000 GitHub stars within days, OpenClaw is now deployed on local machines with deep system access. It’s like a robot “servant” (think Irona, for Richie Rich fans) to whom you hand the keys to your house. Its job is to clean the place, and you give it the autonomy it needs to take action and manage your stuff (files and data) as it sees fit. The whole point is to accomplish the task at hand – inbox triage, auto-replies, content curation, travel planning, etc.

Next we have Google Antigravity, a coding agent with an IDE that accelerates the path from early prototype to production. You can interactively create complete software projects and change specific details with individual instructions. It’s like having a junior developer who can not only code but also build, test, integrate and fix problems. In the real world, it’s like hiring an electrician: They’re really good at a specific job, and you only need to give them access to a specific item (your electrical junction box).

Finally, we have the mighty Claude. The release of Anthropic’s Cowork, which features AI agents that automate legal tasks such as contract review and NDA triage, led to a sharp drop in legal technology and software-as-a-service (SaaS) stocks (the so-called “SaaSpocalypse”). Claude has always been a chatbot; with Cowork, it now has domain knowledge for specific areas such as law and finance. It’s like hiring an accountant. They know the domain inside out and can complete taxes and manage invoices. Users give it access to highly sensitive financial details.

Make these tools work for you

The key to making these tools more effective is to give them more power, but that also increases the risk of misuse. Users must rely on providers such as Anthropic and Google to ensure that agent instructions do not cause harm, leak information, or give certain vendors an unfair (illegal) advantage. OpenClaw is open source, which makes things harder because there is no central governing body.

While these technological advancements are amazing and meant for the greater good, it only takes one or two negative incidents to cause panic. Imagine a rogue electrician connecting the wrong wire and frying all the circuits in your house. In an agent scenario, this could mean injecting the wrong code, crashing a larger system, or adding hidden flaws that aren’t immediately discovered. Cowork may miss key savings opportunities when doing a user’s taxes; on the flip side, it may include illegal deductions. Claude can do unimaginable damage when it has more control and power.

But in the midst of this chaos, there is a real opportunity to seize. With the right guardrails in place, agents can focus on specific actions and avoid making random, unaccountable decisions. The principles of responsible AI – accountability, transparency, reproducibility, security, privacy – are extremely important. Logging agent steps and requiring human validation are absolutely essential.
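To make the guardrail idea concrete, here is a minimal Python sketch of an agent wrapper that restricts actions to an allow-list, asks a human to approve sensitive ones, and logs every step. It is only an illustration under stated assumptions: `GuardedAgent`, `ALLOWED_ACTIONS`, `NEEDS_APPROVAL` and the tool names are hypothetical and do not correspond to any API in OpenClaw, Antigravity or Cowork.

```python
import json
import time
from typing import Callable, Dict, List

# Hypothetical allow-list: the only actions this agent may ever take.
ALLOWED_ACTIONS = {"read_file", "draft_reply"}
# Hypothetical subset of actions that need a human sign-off first.
NEEDS_APPROVAL = {"draft_reply"}


class GuardedAgent:
    """Wraps tool calls with an allow-list, human validation and an audit log."""

    def __init__(self, tools: Dict[str, Callable[..., str]],
                 approve: Callable[[str, dict], bool]) -> None:
        self.tools = tools          # action name -> function that performs it
        self.approve = approve      # callback standing in for a human reviewer
        self.audit_log: List[str] = []

    def _log(self, action: str, args: dict, outcome: str) -> None:
        # One JSON line per step, so that every decision can be traced later.
        self.audit_log.append(json.dumps(
            {"ts": time.time(), "action": action, "args": args, "outcome": outcome}
        ))

    def run(self, action: str, **args) -> str:
        if action not in ALLOWED_ACTIONS or action not in self.tools:
            self._log(action, args, "blocked")
            raise PermissionError(f"action not allowed: {action}")
        if action in NEEDS_APPROVAL and not self.approve(action, args):
            self._log(action, args, "rejected")
            raise PermissionError(f"human reviewer rejected: {action}")
        result = self.tools[action](**args)
        self._log(action, args, "executed")
        return result
```

In this sketch, blocked and rejected attempts are logged as well, so the audit trail records what the agent tried to do, not only what it did.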

Also, when agents deal with many different systems, it is important that they speak the same language. An ontology becomes very important for tracking, tracing and recording events. A shared domain-specific ontology can define a “code of conduct.” These ethics can help manage the chaos. Combined with a shared trust and distributed identity framework, this lets us build systems that enable agents to do truly useful work.
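As a rough illustration of what “speaking the same language” could look like, the Python sketch below defines a tiny shared vocabulary of actions and resources and checks events against a code of conduct. Everything in it is an assumption made for illustration: the `Action` and `Resource` terms, the `AgentEvent` record and the `PERMITTED` pairs are hypothetical, and a real domain ontology would be far richer.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    """Hypothetical shared vocabulary of things an agent can do."""
    READ = "read"
    WRITE = "write"
    SEND = "send"


class Resource(Enum):
    """Hypothetical shared vocabulary of things an agent can act on."""
    FILE = "file"
    EMAIL = "email"
    INVOICE = "invoice"


@dataclass(frozen=True)
class AgentEvent:
    agent_id: str
    action: Action
    resource: Resource

    @classmethod
    def from_dict(cls, raw: dict) -> "AgentEvent":
        # Enum lookup by value raises ValueError for any term that is
        # outside the shared vocabulary, so unknown events are rejected early.
        return cls(
            agent_id=str(raw["agent_id"]),
            action=Action(raw["action"]),
            resource=Resource(raw["resource"]),
        )


# Hypothetical "code of conduct": the (action, resource) pairs agents may perform.
PERMITTED = {
    (Action.READ, Resource.FILE),
    (Action.READ, Resource.EMAIL),
    (Action.SEND, Resource.EMAIL),
}


def is_permitted(event: AgentEvent) -> bool:
    """Return True if the event is covered by the shared code of conduct."""
    return (event.action, event.resource) in PERMITTED
```

Because every agent reports events in the same terms, the same check and the same log format can be reused across systems, which is what makes events traceable.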

When implemented correctly, an agent ecosystem can greatly offload the human “cognitive load” and free our workforce to perform high-value tasks. People will benefit when agents take care of the mundane.

Dattaraj Rao is an innovation and R&D architect at Persistent Systems.