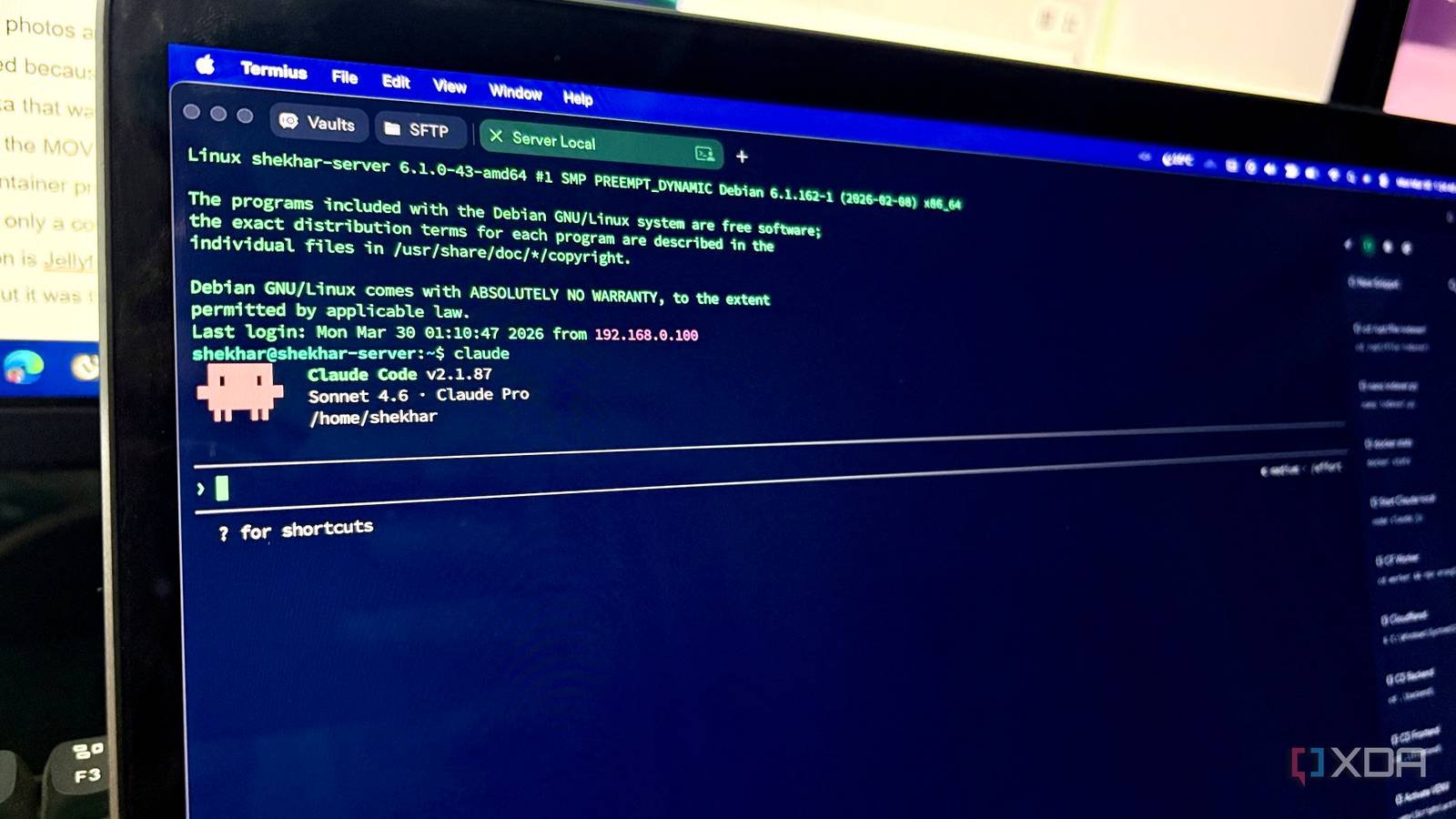

Most developers who have been using Claude Code haven’t spent much time thinking about what the AI agent was doing with all the context it collected. That includes me. The tool’s usefulness comes from its deep access to everything in your environment, including files, running services, and installed packages. This is how it provides relevant answers when you have a question about your system.

In March 2026, Anthropic accidentally released a version of Claude Code that exposed hundreds of thousands of lines of source code. The leak revealed a number of unreleased and upcoming features, including a Tamagotchi-style pet. That is the part most people find interesting, but the leak also confirms what data Claude Code collects from your machine and what it does with it.

It collects more than your prompts

The data profile goes deeper than you might expect

I already expected that Anthropic saved every prompt I sent to Claude Code. No one should be shocked by this. The disturbing part of the disclosure is that it also uploads the contents of any file that Claude Code reads. You are expected to point the tool at your codebase and system files to get its help, meaning users send a lot of data to Anthropic when using the tool for its intended purpose. To clarify, this is not harmless file metadata, but the actual content of your files. This information may be retained by Anthropic for up to seven years in some cases.

The leaked source also confirms that the uploaded session information includes user ID, session ID, account UUID, org UUID, email address, software version, platform, terminal type, and active feature flags. Since the user’s ID is passed along with the uploaded file content, it is reasonable to assume that the user’s files are associated with their user ID. In other words, Anthropic stores a lot of each user’s data and knows exactly who owns what.

Claude Code also has a “memory” feature. This is the tool’s ability to store relevant information about your project so that it can recall it across sessions. It keeps records of your settings, preferences, and conventions, so it doesn’t have to relearn your environment every time you open a new session. This is a useful feature, but all of this context has to reside on Anthropic’s servers for it to work.

Background features no one told you about

KAIROS and sleep mode are more interesting than they sound

The leaked code shows a few unreleased features that give us some insight into the future of Claude Code. KAIROS is the name of a daemon (a process running in the background) that allows the user to run Claude Code without ever looking at a terminal. It can fix bugs and run tasks while you’re away, and send push notifications when it needs your attention.

“Sleep mode” is a background process that runs when Claude Code is idle. It’s essentially a mode where the model can reflect on past interactions, spot patterns, and understand your project. This is actually a neat idea: it’s the same kind of perspective you get by walking away from a problem and coming back to it.

These features sound really nice, but they are fundamentally different from what Claude Code currently offers. Both of these new features will work in the background and without user intervention, bringing a new level of autonomy to the agent tool that not everyone is comfortable with. Neither of these is available yet, but given how much of the supporting code has been revealed, both appear to be in development.

None of this was a secret

Anthropic’s documentation already covers this

None of the data practices revealed by the leak conflicts with Anthropic’s terms, which users must agree to before using Claude Code. Most users aren’t going to read terms and conditions pages, especially when the details are scattered across different policy and security pages. A leak that makes headlines will do more to inform users about these practices than any terms page.

Anthropic’s data retention period ranges from 30 days to seven years. It all depends on the plan level you subscribe to and whether any security flags are applied to your account. In most cases, deleting a conversation will delete the data from Anthropic’s servers within 30 days. If you choose to allow Anthropic to train models on your data, they will retain it for up to five years. Security flags override this behavior, and with them the longest possible retention period is seven years. Claude’s documentation uses vague language, but Concretio’s blog post clearly details the retention policy.
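To make those tiers easier to compare, here is a minimal Python sketch of the rules as this article describes them. It is not Anthropic's implementation: the function name and its two parameters are hypothetical, plan level is left out because the article gives no per-plan numbers, and a year is approximated as 365 days.

```python
from datetime import timedelta

DAYS_PER_YEAR = 365  # rough conversion, ignores leap years


def max_retention(training_opt_in: bool, security_flagged: bool) -> timedelta:
    """Upper bound on retention, following the tiers described in the article.

    Both parameters are hypothetical names used only for illustration.
    """
    # Security flags override everything else: up to seven years.
    if security_flagged:
        return timedelta(days=7 * DAYS_PER_YEAR)
    # Opting in to model training: up to five years.
    if training_opt_in:
        return timedelta(days=5 * DAYS_PER_YEAR)
    # Otherwise, deleted conversations are removed within 30 days.
    return timedelta(days=30)
```

The order of the checks matters: the security flag is tested first because the article says it overrides the other settings.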

Agent tools are inherently invasive

Anthropic has published a list of environment variables for limiting this scope. Setting CLAUDE_CODE_DISABLE_AUTO_MEMORY=1 disables memory and telemetry write operations, and --bare mode completely removes the memory and autoDream features. These were previously buried in the documentation and I hadn’t come across them myself, so I’d bet most other users hadn’t either.
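If you want these settings applied every time, one option is a small wrapper script. The Python sketch below is only an illustration: the variable name and the --bare flag come from this article, while the `claude` executable name and the helper functions are my own assumptions, so check them against your installation before relying on it.

```python
import os
import shutil
import subprocess

# Variable name as given in the article; its exact effect is whatever
# Anthropic's documentation says it is.
AUTO_MEMORY_VAR = "CLAUDE_CODE_DISABLE_AUTO_MEMORY"


def restricted_env(base=None):
    """Return a copy of the environment with auto memory disabled."""
    env = dict(os.environ if base is None else base)
    env[AUTO_MEMORY_VAR] = "1"
    return env


def build_command(bare=False, executable="claude"):
    """Build the argument list. The executable name is an assumption."""
    cmd = [executable]
    if bare:
        cmd.append("--bare")  # flag named in the article
    return cmd


def launch(bare=False):
    """Hypothetical launcher: run the tool with the restricted environment."""
    cmd = build_command(bare)
    if shutil.which(cmd[0]) is None:
        raise FileNotFoundError(f"{cmd[0]} was not found on PATH")
    return subprocess.run(cmd, env=restricted_env(), check=False).returncode
```

Calling `launch(bare=True)` would start the tool with both settings applied. Building the environment as a copy means your shell's own variables are left untouched.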

The reality is that agent tools need deep access to your system or project to be useful. The trade-off is how much information you are willing to give the tool, and this leak reveals how much you’ve already shared without realizing it.