If you’ve looked into running AI models on your own hardware, you’ve likely come across Ollama. It’s the default recommendation in many places, the tool most YouTube tutorials steer you toward, and the starting point that many self-hosting guides use for local inference. And for good reason: getting a model running with Ollama is as easy as typing “ollama run gpt-oss:20b” and waiting. It’s the Docker of local LLMs, and given that some of the people behind Ollama come from the Docker world, the comparison is no coincidence.

That convenience comes at a price, and once you understand what’s going on under the hood, it’s hard to justify using Ollama over the alternatives. It’s slower than it needs to be, it makes choices you can’t easily undo, and the project itself is headed in a direction that will worry anyone who cares about open source software. I’ve been running local LLMs for a while now and moved away from Ollama a long time ago for most of my projects.

And it hides the settings that will fix it

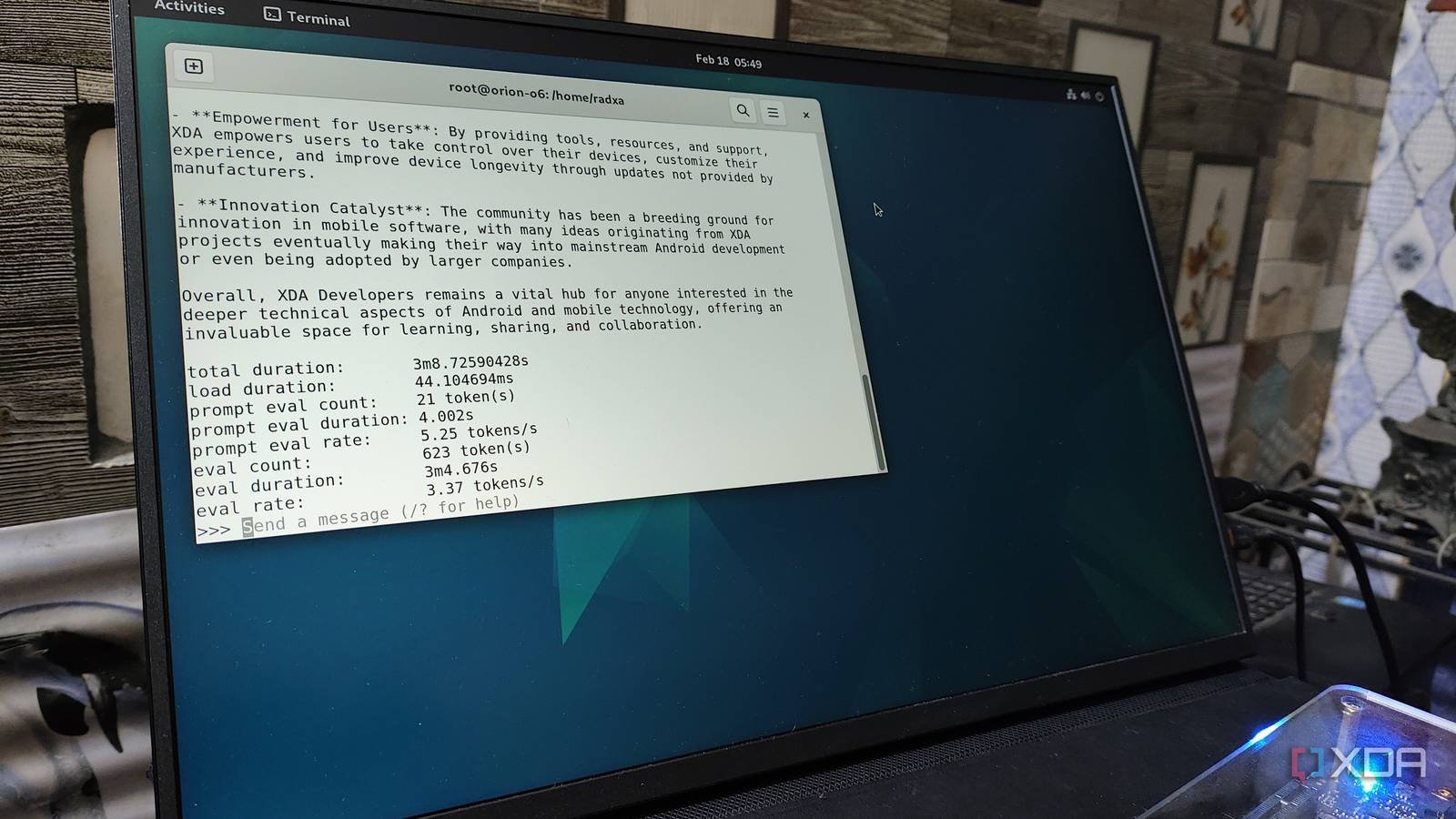

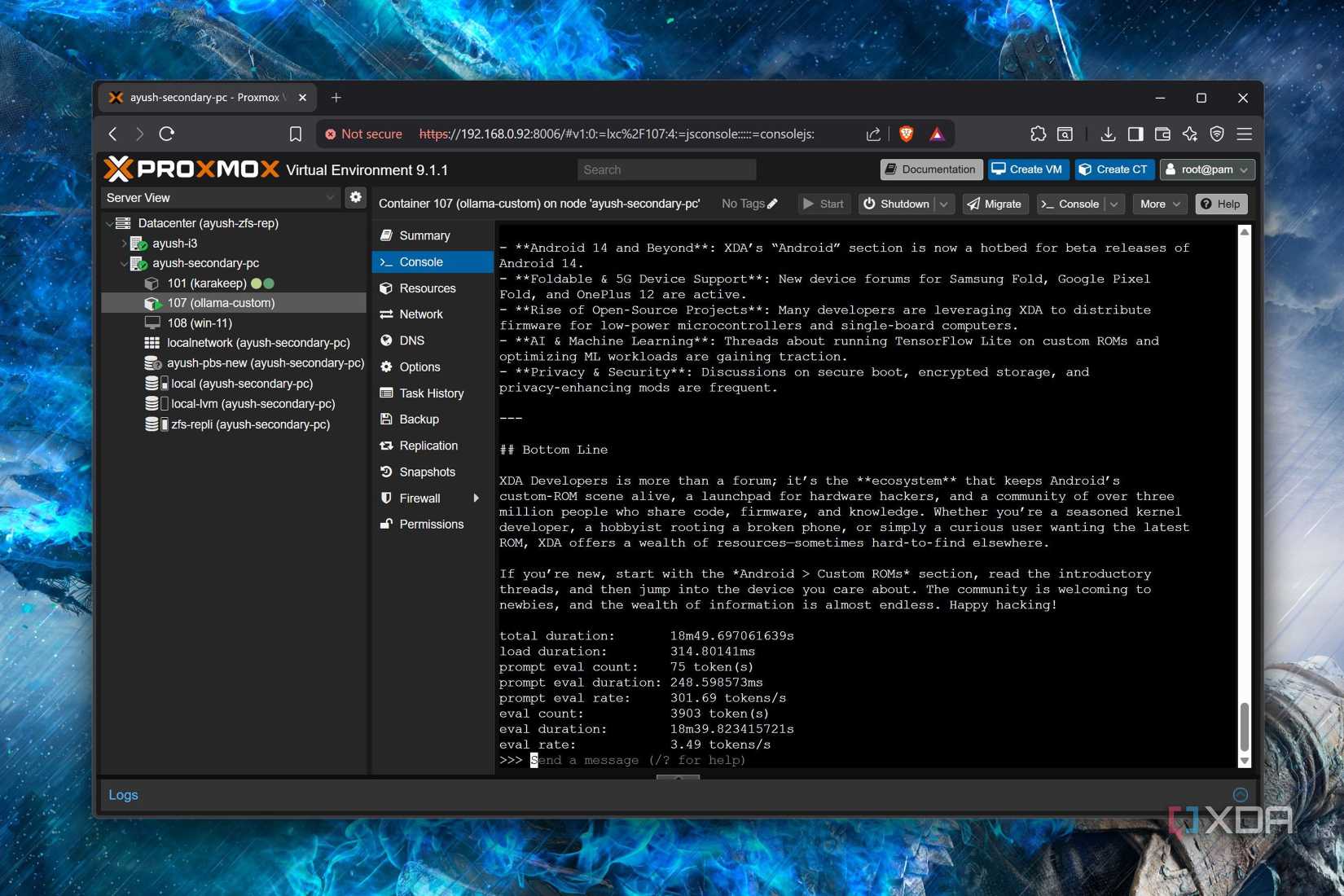

The most immediate problem with Ollama is performance. Numerous community benchmarks and developer reports have shown that Ollama produces fewer tokens per second on the same model than running it directly via llama.cpp, and the pattern keeps repeating. Sometimes the difference is pretty big, and it’s a gap you can feel while you’re waiting for output.

Part of the problem is Ollama’s defaults. For example, the context window defaults to 4,096 tokens for most people, and it used to be even lower. Ollama does set the default context length dynamically depending on how much VRAM is available, but the larger dynamic context windows only kick in on GPUs with more than 24GB of VRAM. Even Ollama’s own documentation recommends a context of at least 64,000 tokens for “highly context-intensive tasks such as web browsing, agents, and coding tools.”

That default is quite small given that modern models use significantly less VRAM for their KV cache. Gemma 4 supports 128K or 256K contexts depending on the model, and newer architectures reduce the memory cost of long contexts enough that the 4K default now looks out of step with how these models are actually used.
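To make the link between context length and VRAM concrete, here is a rough back-of-the-envelope sketch. It uses the standard estimate for a full-attention KV cache (keys plus values, for every layer and every token). The model shape below is hypothetical and not taken from any model named in this article, and the formula ignores tricks such as sliding-window attention or cache quantization that shrink the real number.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, context_len, bytes_per_elem=2):
    """Rough KV cache size for plain full attention.

    The leading 2 accounts for storing both keys and values;
    bytes_per_elem=2 assumes an fp16 cache.
    """
    return 2 * n_layers * n_kv_heads * head_dim * context_len * bytes_per_elem


# Hypothetical model shape (an assumption for illustration only):
# 32 layers, 8 KV heads (grouped-query attention), head dimension 128.
for ctx in (4096, 65536):
    gib = kv_cache_bytes(32, 8, 128, ctx) / 2**30
    print(f"{ctx:>6} tokens -> {gib:.1f} GiB of KV cache")
```

The cache grows linearly with context, so moving from a 4K to a 64K window multiplies its size by 16 for this shape. Architectures that cut the number of KV heads or limit attention to a window reduce that cost, which is why a conservative 4K default fits them poorly.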

If num_ctx isn’t set manually, via an environment variable, a command, or the Ollama API, the context window can become a bottleneck in long-context workloads, especially once prompts start to exceed the narrow defaults Ollama ships with. The problem is that when Ollama sits behind another tool, none of this is particularly obvious, especially to the beginners Ollama is supposed to help.
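As an illustration of the API route, the sketch below builds a request to Ollama’s local HTTP API with num_ctx set explicitly under "options". It assumes Ollama is listening on its default local address; the helper names and the 65,536-token default are my own choices for the example, not anything Ollama prescribes.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(model, prompt, num_ctx):
    """Build a POST request that overrides the context window for one call."""
    payload = {
        "model": model,
        "prompt": prompt,
        "stream": False,                  # return one JSON object, not a stream
        "options": {"num_ctx": num_ctx},  # explicit context length in tokens
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model, prompt, num_ctx=65536):
    """Send the request and return the generated text (needs a running server)."""
    with urllib.request.urlopen(build_generate_request(model, prompt, num_ctx)) as resp:
        return json.loads(resp.read())["response"]
```

Calling `generate("gpt-oss:20b", "Summarize this file...")` would then run with the larger window, provided the model and your VRAM can handle it. A tool that sits in front of Ollama has to pass this option through itself, and that is exactly where the default tends to slip by unnoticed.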

Moreover, Ollama’s abstraction layer adds overhead that is not present in raw llama.cpp. The Nullmirror team documented their switch from Ollama to llama.cpp and found consistent performance improvements, with no change in quality, on every model they tested. Their conclusion was clear: throughput and control mattered more than the convenience Ollama offered.

The trust issue is harder to ignore

Naming things is hard, but this was a choice

Performance is a trade-off you can choose to accept, but trust is different, and Ollama has been slowly losing it over time.

When DeepSeek released the R1 model family in early 2025, Ollama listed smaller distilled versions, such as DeepSeek-R1-Distill-Qwen-32B, in its library as simply “DeepSeek-R1”. This caused a lot of confusion. Social media was full of people claiming to be running “DeepSeek-R1” on their consumer hardware, when in fact they were running smaller distilled variants that didn’t behave like the full 671 billion parameter model. Ollama knew the difference and chose to hide it anyway, probably because “DeepSeek-R1” drives more downloads than “DeepSeek-R1-Distill-Qwen-32B”. Even now, “ollama run deepseek-r1” runs an 8B distilled variant derived from Qwen3.

Then there is the infrastructure itself. Ollama stores models in its own registry format using hashed filenames, which makes it surprisingly difficult to take the models you’ve downloaded and use them with another inference engine. If you’ve been pulling models through Ollama for months, you can’t just point LM Studio or llama.cpp at those files without doing some extra work. This is a form of vendor lock-in that most people won’t notice until they try to leave. You can import your own GGUFs into Ollama by creating a Modelfile, but getting your Ollama models out to other platforms is not that easy.
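The “extra work” usually means reading a manifest to find out which hashed blob holds the weights. The sketch below shows the idea. The directory layout, the `registry.ollama.ai/library` path, and the `application/vnd.ollama.image.model` media type are assumptions based on how local installs have commonly been laid out, and Ollama can change them at any time; the function names are hypothetical.

```python
import json
from pathlib import Path

# Assumption: this media type marks the layer that contains the model weights.
MODEL_MEDIA_TYPE = "application/vnd.ollama.image.model"


def find_weights_blob(manifest, blobs_dir):
    """Map a parsed manifest to the blob file that holds the weights."""
    for layer in manifest.get("layers", []):
        if layer.get("mediaType") == MODEL_MEDIA_TYPE:
            # A digest like "sha256:abc..." is assumed to be stored
            # on disk as a file named "sha256-abc...".
            return Path(blobs_dir) / layer["digest"].replace(":", "-", 1)
    raise ValueError("no model weights layer found in manifest")


def locate_gguf(model, tag, models_dir=Path.home() / ".ollama" / "models"):
    """Resolve e.g. ("deepseek-r1", "8b") to a blob path (assumed layout)."""
    manifest_path = (
        models_dir / "manifests" / "registry.ollama.ai" / "library" / model / tag
    )
    manifest = json.loads(manifest_path.read_text())
    return find_weights_blob(manifest, models_dir / "blobs")
```

If the layout matches, the returned path can be symlinked to a readable `.gguf` name and opened by other tools. The fact that you need a script for this at all is the friction in question.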

About a year ago, Ollama also moved away from using llama.cpp for inference. They built a custom implementation on top of ggml, the low-level library that llama.cpp itself uses. Their stated reason was stability: llama.cpp moves fast and breaks things, and Ollama’s enterprise partners need reliability. This is a fair argument on paper. In practice, however, their custom backend reintroduced bugs that llama.cpp had fixed years ago. Community members pointed out broken structured output support and other regressions that were simply not present in upstream llama.cpp.

There have also been complaints about MIT license compliance: binary distributions of Ollama have been accused of not properly crediting the authors of llama.cpp, the project Ollama was built on top of. To make matters worse, its GUI application was not part of the main GitHub repository at launch, its license was unclear, and its source code was not available. The source code is in the repository now, but that only makes the original launch look worse, not better. If your project trades on being open source, you can’t be vague about what’s open and what’s not at launch.

Ollama, to their credit, did try to give public acknowledgment where appropriate when this controversy first arose, and also stuck a “Thank you” note at the end of a published blog post.

Ollama is a Y Combinator-backed startup with venture capital funding and a growing team. None of this is inherently bad, but it does mean that the project’s incentives aren’t purely community-driven. A confusingly launched desktop app, a friction-filled model registry, and a move away from llama.cpp all point in the same direction.

Alternatives are easier than you think

You don’t need Ollama to keep things simple

The tools Ollama is built on top of are directly available and in most cases not too difficult to set up, and llama.cpp is the obvious starting point. This is the C++ inference engine that most of the local LLM world depends on, and it gives you direct control over everything that Ollama abstracts away. You get an OpenAI-compatible API server, full control over context windows and sampling settings, and consistently better throughput. If you want more performance, ik_llama.cpp is a fork that takes CPU and multi-GPU performance even further, with three to four times speed improvements in some multi-GPU configurations.
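Because the llama.cpp server speaks the OpenAI chat-completions format, talking to it takes only a few lines. The sketch below assumes a server already started with something like `llama-server -m model.gguf -c 65536` (the model file name is hypothetical) and listening on port 8080; the helper names and the sampling defaults are illustrative choices, not recommendations.

```python
import json
import urllib.request

# Assumption: llama-server is running locally on port 8080.
BASE_URL = "http://localhost:8080/v1"


def build_chat_request(prompt, temperature=0.7, top_p=0.95, max_tokens=256):
    """Build an OpenAI-style chat completion request with explicit sampling."""
    payload = {
        "model": "local",  # placeholder name; the server uses the model it loaded
        "messages": [{"role": "user", "content": prompt}],
        "temperature": temperature,
        "top_p": top_p,
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt, **sampling):
    """Send the request and return the assistant's reply (needs a running server)."""
    with urllib.request.urlopen(build_chat_request(prompt, **sampling)) as resp:
        body = json.loads(resp.read())
    return body["choices"][0]["message"]["content"]
```

Since the request shape is the standard OpenAI one, the same client code should work against other backends that expose a compatible endpoint by changing only `BASE_URL`. The context size is fixed when you launch the server, so it is visible in your own command rather than hidden in a default.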

Plus, if you’re running multiple models and want automatic Ollama-style swapping, llama-swap handles that with a single YAML configuration file. It sits in front of llama.cpp and routes requests to the correct model, spinning models up and down as needed. llama.cpp also has its own web GUI that you can open in your browser to interact with it.

If you want a GUI, LM Studio supports any GGUF model and exposes all of llama.cpp’s tuning options through a clean interface. It has no proprietary model format, no registry lock-in, and you can use the same model files with any other tool. On top of that, koboldcpp is another llama.cpp-based option with granular control over each sampling parameter and a built-in web UI.

vLLM is another popular option and is what I use with Claude Code on my ThinkStation PGX. If you’re serving models to multiple users or running agent workflows, it’s the best choice because it handles continuous batching and PagedAttention, which makes a real difference in demanding workloads. And if you want a polished front end for any of these backends, Open WebUI connects easily to all of them.
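To see why continuous batching matters when several requests arrive at once, here is a toy simulation. It is not vLLM’s scheduler, just a minimal model of the idea under simplifying assumptions: every request needs a known, positive number of decode steps, and each step advances every active request by one token.

```python
from collections import deque


def static_batch_steps(lengths, batch_size):
    """Static batching: each batch runs until its longest request finishes."""
    total = 0
    for i in range(0, len(lengths), batch_size):
        total += max(lengths[i:i + batch_size])
    return total


def continuous_batch_steps(lengths, batch_size):
    """Continuous batching: a freed slot is refilled before the next step."""
    waiting = deque(lengths)
    active = []  # remaining decode steps for each in-flight request
    steps = 0
    while waiting or active:
        # Fill any free slots from the queue.
        while waiting and len(active) < batch_size:
            active.append(waiting.popleft())
        steps += 1
        # Advance every active request by one token and drop finished ones.
        active = [remaining - 1 for remaining in active if remaining > 1]
    return steps
```

With request lengths `[10, 2, 2, 2]` and a batch size of 2, the static version takes 12 steps, because the short request sharing a batch with the long one leaves its slot idle for 8 of them. The continuous version takes 10, since the short requests cycle through the free slot while the long one keeps running. Real servers see far more uneven request lengths, so the gap grows.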

None of these tools takes more than a few minutes to set up. The idea that Ollama is the only viable option for beginners doesn’t hold up once you actually try the alternatives, as many of them have caught up to the ease of use that Ollama pioneered years ago.

Ollama served a purpose when local LLMs were new and the tools around them were crude. It’s just not like that anymore. The alternatives are faster, more transparent, and don’t come with the baggage of a company making product decisions that prioritize control and product packaging over the clarity and interoperability that made the project popular. If you’re still using it out of habit, it may be time to pull the plug.