Ever since I went down the local LLM rabbit hole, I’ve loved revitalizing old computers by turning them into reliable AI workstations. With the right tweaks, I’ve even managed to run powerful LLMs that can compete with their cloud-based counterparts on an obsolete, 10-year-old rig. However, most of my hardcore LLM experiments have involved full-fledged x86 gaming systems with dedicated graphics cards and plenty of RAM.

That said, a Raspberry Pi 5 can run models of up to 4B parameters without buckling under the load, making it a surprisingly decent choice for hosting lightweight models and simple chatbots. But since I wanted to run models that wouldn’t otherwise fit on this SBC, I thought I’d try clustering it with some spare boards. It’s probably one of the most cursed projects I’ve ever worked on (but it still has some merit).

Creating the llama.cpp cluster was not that difficult

I had to build the inference engine on both systems

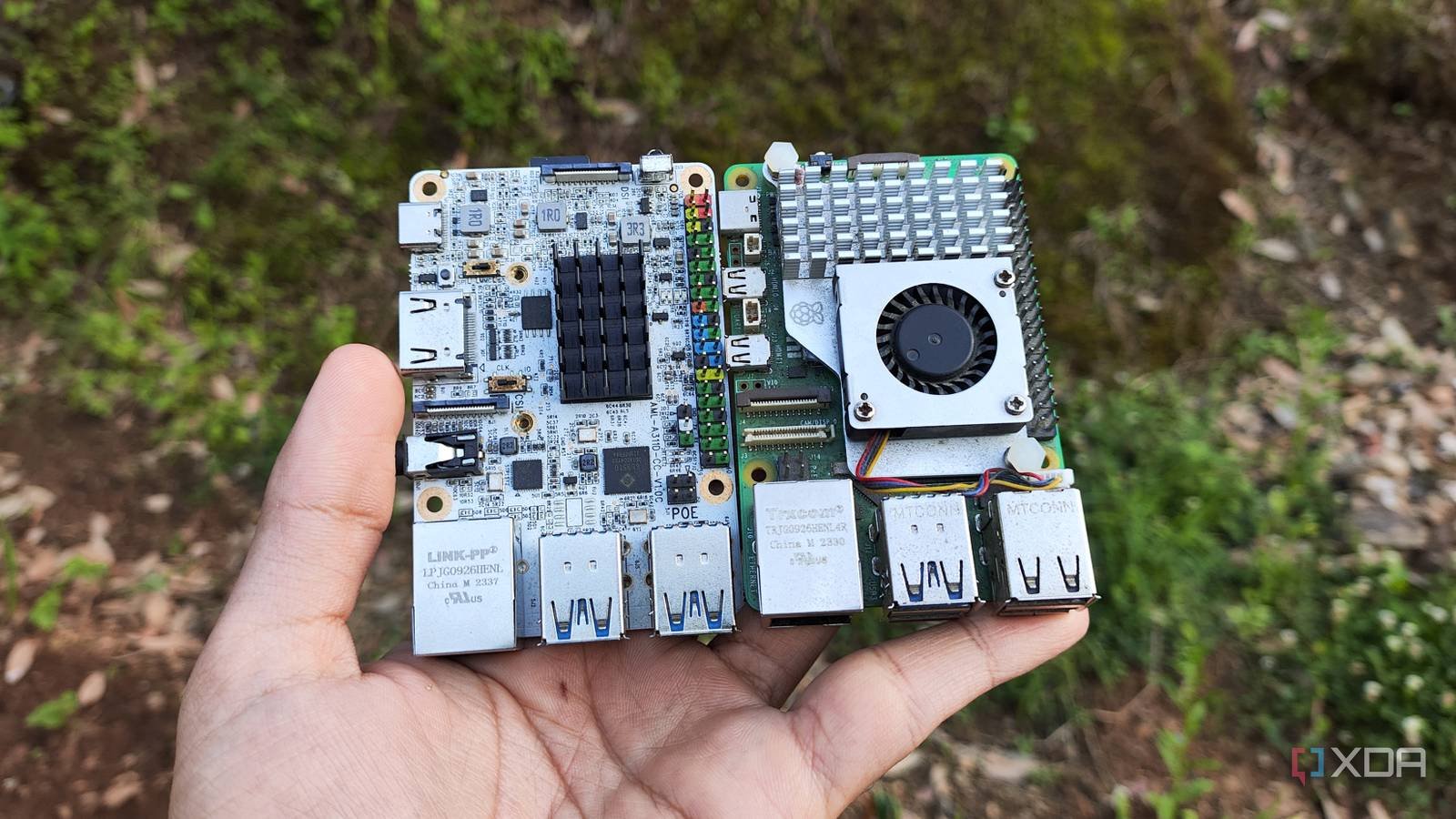

As for the SBCs I wanted to use as guinea pigs for this project, I initially planned to spin up a three-device cluster. However, I quickly realized that most of my ARM boards, apart from the Raspberry Pi 5, were already tied up in one experiment or another, leaving the Libre Computer Alta and La Frite as the only viable options. Unfortunately, La Frite is pretty weak for this project, and its USB 2.0 ports and 100 Mbps Ethernet would only hobble an already weak setup. So I went with a 2-node cluster consisting of a Raspberry Pi 5 (8GB) and a Libre Computer Alta (4GB), splitting the inference workload between the two systems with llama.cpp’s RPC backend.
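To make the idea of splitting inference across two boards more concrete, here’s a minimal Python sketch that divides a model’s layers between nodes in proportion to their RAM. The proportional-by-memory rule, the hypothetical `split_layers` helper, and the 36-layer model size are all assumptions for illustration; llama.cpp’s RPC backend uses its own splitting logic (adjustable with options such as `--tensor-split`), which this doesn’t attempt to reproduce.

```python
def split_layers(n_layers: int, mem_gb: dict[str, float]) -> dict[str, int]:
    """Assign layers to nodes in proportion to each node's memory (hypothetical scheme)."""
    total = sum(mem_gb.values())
    # Each node's exact proportional share, then its whole-layer floor
    shares = {node: n_layers * mem / total for node, mem in mem_gb.items()}
    alloc = {node: int(share) for node, share in shares.items()}
    # Hand any leftover layers to the nodes with the largest fractional remainders
    leftover = n_layers - sum(alloc.values())
    by_remainder = sorted(shares, key=lambda n: shares[n] - alloc[n], reverse=True)
    for node in by_remainder[:leftover]:
        alloc[node] += 1
    return alloc


if __name__ == "__main__":
    # Raspberry Pi 5 (8GB) + Libre Computer Alta (4GB), hypothetical 36-layer model
    print(split_layers(36, {"rpi5": 8.0, "alta": 4.0}))  # → {'rpi5': 24, 'alta': 12}
```

Under this scheme, the 8GB Pi would take two-thirds of the layers and the Alta the remaining third.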

Fortunately, even though I had to compile llama.cpp from source, the installation process was much simpler than I expected. Once I’d armed both systems with a CLI distro (Ubuntu on the Alta and an older release of Raspberry Pi OS Lite on the Pi) and configured `openssh-server`, I accessed them via PuTTY and installed the prerequisite packages by running `sudo apt install -y git build-essential cmake pkg-config`. Then I cloned the llama.cpp repo with `git clone https://github.com/ggml-org/llama.cpp.git` and moved into the newly created folder with `cd llama.cpp`. Finally, I created another folder named build-rpc through `mkdir -p build-rpc` before jumping into it (`cd build-rpc`) and running the following commands to compile llama.cpp with RPC support:

cmake .. -DGGML_RPC=ON -DCMAKE_BUILD_TYPE=Release

cmake --build . --config Release -j$(nproc)

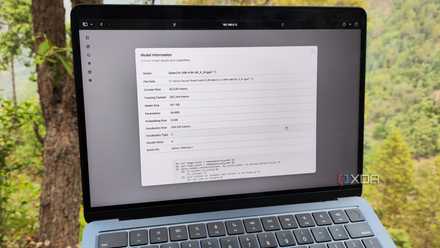

Since I wanted the Alta to act as the secondary (worker) node, I ran `./bin/rpc-server -H 0.0.0.0 -p 50052` on it and left the RPC server running. After using `scp` to transfer some LLMs from my main rig to the Raspberry Pi node, I ran `./bin/llama-server -m /home/ayush/models/Qwen3.5-2B-Q4_K_M.gguf --rpc 192.168.0.150:50052 --host 0.0.0.0 --port 8080` and waited for it to finish loading the model.
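Since the worker’s `-p` port and the host’s `--rpc` endpoint have to agree, one way to avoid a mismatch is to derive both command lines from the same values. The minimal Python sketch below only assembles the two commands from this setup (it doesn’t launch anything); the `build_commands` helper is hypothetical, and the IP address, port, and model path are the ones used above, so they’d need adjusting for another network.

```python
import shlex


def build_commands(
    worker_ip: str, rpc_port: int, model_path: str, http_port: int = 8080
) -> tuple[list[str], list[str]]:
    """Return (worker, host) argv lists that share a single RPC port."""
    # Runs on the worker (Alta): accept RPC clients on all interfaces
    worker = ["./bin/rpc-server", "-H", "0.0.0.0", "-p", str(rpc_port)]
    # Runs on the host (Pi): serve the model and offload part of it to the worker
    host = [
        "./bin/llama-server",
        "-m", model_path,
        "--rpc", f"{worker_ip}:{rpc_port}",
        "--host", "0.0.0.0",
        "--port", str(http_port),
    ]
    return worker, host


if __name__ == "__main__":
    worker, host = build_commands(
        "192.168.0.150", 50052, "/home/ayush/models/Qwen3.5-2B-Q4_K_M.gguf"
    )
    print("worker:", shlex.join(worker))
    print("host:  ", shlex.join(host))
```

Keeping the port in a single variable means changing it on one side can’t silently break the other.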

It turned out that the cluster performed worse than a standalone SBC

I blame the slow networking for this bottleneck

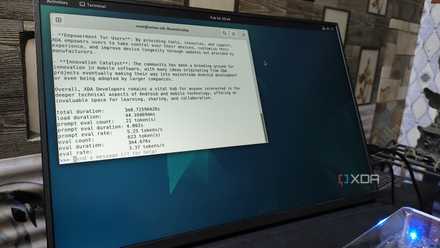

Since I was using a fairly light Gemma 3 4B, I expected my cluster to perform slightly better than the Raspberry Pi alone. However, running a few prompts through llama-server’s web interface proved otherwise. And I’m not talking about complex instructions or inference tasks involving MCP servers. For something as simple as “tell me something nice”, the cluster struggled to reach 2.20 tokens/second. So I rebooted the Raspberry Pi and ran the llama-server command again, except without the `--rpc` flag this time. Sure enough, the inference engine hit 4.37 t/s, almost twice as fast as the clustered setup!
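The “almost twice as fast” figure follows directly from the two measurements. This small Python snippet computes the ratio and, since a generation rate is the inverse of per-token time, how much extra time the cluster spent on each token; the function names are just illustrative.

```python
def relative_speed(standalone_tps: float, cluster_tps: float) -> float:
    """How many times faster the standalone run is than the clustered one."""
    return standalone_tps / cluster_tps


def extra_seconds_per_token(standalone_tps: float, cluster_tps: float) -> float:
    """Additional time the cluster needs for every generated token."""
    return 1 / cluster_tps - 1 / standalone_tps


if __name__ == "__main__":
    print(f"standalone is {relative_speed(4.37, 2.20):.2f}x faster")       # → 1.99x
    print(f"cluster adds {extra_seconds_per_token(4.37, 2.20):.3f} s/token")  # → 0.226
```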

In theory, the cluster should either hit higher token generation rates or at least deliver speeds comparable to a Raspberry Pi-only setup. But once you factor network and storage bottlenecks into the equation, the result makes perfect sense. Both SBCs are limited to 1GbE connectivity, which is quite slow for high-speed AI inference. Worse, I’d run out of SSDs in my home lab, so I had to make do with microSD cards, which definitely contributed to the speed factor (or lack thereof). Add the fact that distributed LLM inference is highly latency-sensitive, and it’s clear why my cluster performed so terribly. I was about to call this project a failure and wrap things up here, but I wanted to try one last experiment before tearing down the cluster…
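Why overhead hurts so much can be illustrated with a toy model of single-stream generation: when a model’s layers are split across nodes, each token passes through one node’s layers and then the other’s, so their compute times add up rather than overlap, and every token also pays a network cost. The minimal Python sketch below encodes that assumption; the Alta’s speed, the 2:1 split, and the network overhead values are all hypothetical, and only the Pi’s 4.37 t/s comes from the measurements above.

```python
def cluster_seconds_per_token(
    solo_s_per_token: dict[str, float],
    layer_share: dict[str, float],
    network_s: float,
) -> float:
    """Toy model of single-stream generation with a model's layers split across nodes.

    solo_s_per_token[node]: seconds per token if that node ran every layer itself
    layer_share[node]: fraction of the layers assigned to that node (shares sum to 1)
    network_s: per-token time spent moving data between nodes

    For one token, the nodes work through their layers one after another, so their
    compute times add up instead of overlapping.
    """
    compute_s = sum(solo_s_per_token[node] * share for node, share in layer_share.items())
    return compute_s + network_s


if __name__ == "__main__":
    pi_solo = 1 / 4.37                            # Pi's measured standalone rate as s/token
    speeds = {"rpi5": pi_solo, "alta": pi_solo}   # hypothetical best case: Alta as fast as the Pi
    shares = {"rpi5": 2 / 3, "alta": 1 / 3}       # hypothetical 2:1 layer split
    for net in (0.0, 0.1):                        # hypothetical per-token network overhead (s)
        rate = 1 / cluster_seconds_per_token(speeds, shares, net)
        print(f"network {net:.1f} s/token -> {rate:.2f} t/s")  # → 4.37, then 3.04
```

In this simplified model, the best a single-stream cluster can do is match the standalone Pi; any network overhead, or a worker slower than the Pi, drags the rate below it. It ignores effects such as memory pressure, so it’s a way to reason about the trend rather than a prediction of real numbers.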

But the cluster can run LLMs that would otherwise be too heavy for my RPi

Don’t just look at token generation rates

While its lackluster performance is a general buzzkill, my main goal behind this wacky project was simply to run large models that the Raspberry Pi couldn’t accommodate with just 8GB of RAM. So I restarted llama-server without the RPC flag and started loading progressively larger models. Qwen 3.5 9B is where llama-server crashed, as the SBC simply couldn’t fit a model that large.
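A quick way to reason about which models fit is to estimate the size of the weights from the parameter count and the quantization’s bits per weight. The minimal Python sketch below does just that, but it’s a rough approximation covering the weights only: the KV cache (which grows with context length), compute buffers, and the OS itself all need memory on top of it. The bit widths in the example are illustrative, not the exact quantizations used in this project.

```python
def weight_footprint_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone in GB (1 GB = 1e9 bytes)."""
    # billions of params * bits per weight / 8 bits per byte = gigabytes
    return params_billion * bits_per_weight / 8


if __name__ == "__main__":
    for bits in (4.5, 8.0, 16.0):  # illustrative bit widths
        print(f"9B model at {bits:>4} bits/weight: ~{weight_footprint_gb(9, bits):.1f} GB of weights")
```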

But when I ran it with the RPC flag pointing to the Alta, llama-server loaded the model with relative ease. Just to satisfy my curiosity, I opened the web UI and started prompting the LLM. Well, it definitely worked, even though it only generated 1.27 tokens per second. That’s nowhere near fast enough for my productivity tasks and coding workloads. But it’s still somewhat useful for automated tasks like creating tags for bookmarks or running OCR on scanned documents, especially considering I can leave the SBCs running all day without worrying about power consumption.
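To put 1.27 t/s into perspective for background jobs, this minimal Python sketch converts a generation rate into seconds per token and estimates how long a batch of short responses would take. The job count and tokens per job are hypothetical, and the estimate covers generation time only, ignoring prompt processing.

```python
def seconds_per_token(tokens_per_second: float) -> float:
    """Generation rate expressed as time per token."""
    return 1.0 / tokens_per_second


def batch_minutes(n_jobs: int, tokens_per_job: int, tokens_per_second: float) -> float:
    """Minutes to generate n_jobs responses back to back (generation time only)."""
    return n_jobs * tokens_per_job / tokens_per_second / 60


if __name__ == "__main__":
    rate = 1.27  # measured rate for the 9B model on the two-node cluster
    print(f"{seconds_per_token(rate):.2f} s per token")  # → 0.79 s per token
    # Hypothetical batch: tag 100 bookmarks with ~30 output tokens each
    print(f"~{batch_minutes(100, 30, rate):.0f} minutes for the batch")  # → ~39 minutes
```

At roughly 0.79 s per token, the hypothetical 100-bookmark batch would finish in about 39 minutes, which is tolerable for an unattended job.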

And to be brutally honest, I’d expected to be measuring seconds per token instead of tokens per second. So a token generation speed of 1.27 t/s is somewhat surprising, especially for a model the Pi couldn’t load on its own in the first place. While I probably won’t use this SBC cluster for serious inference tasks, llama.cpp’s RPC backend definitely seems useful. In fact, I could use it on my current LXC-based LLM hosting workstations, which have full-fledged 10G NICs.